There is something appealing about the organizational metaphor at the heart of CrewAI. You have a crew of agents, each with a defined role, backstory, and set of tools. They are assigned tasks, they collaborate, they produce outputs. It maps well onto how humans think about delegating work — which turns out to be both its greatest strength and its most significant limitation.

I have built production systems with CrewAI, evaluated it against LangGraph and AutoGen for specific use cases, and watched teams succeed and fail with it. Here is an honest account of where it excels and where you need to be careful.

The Conceptual Model

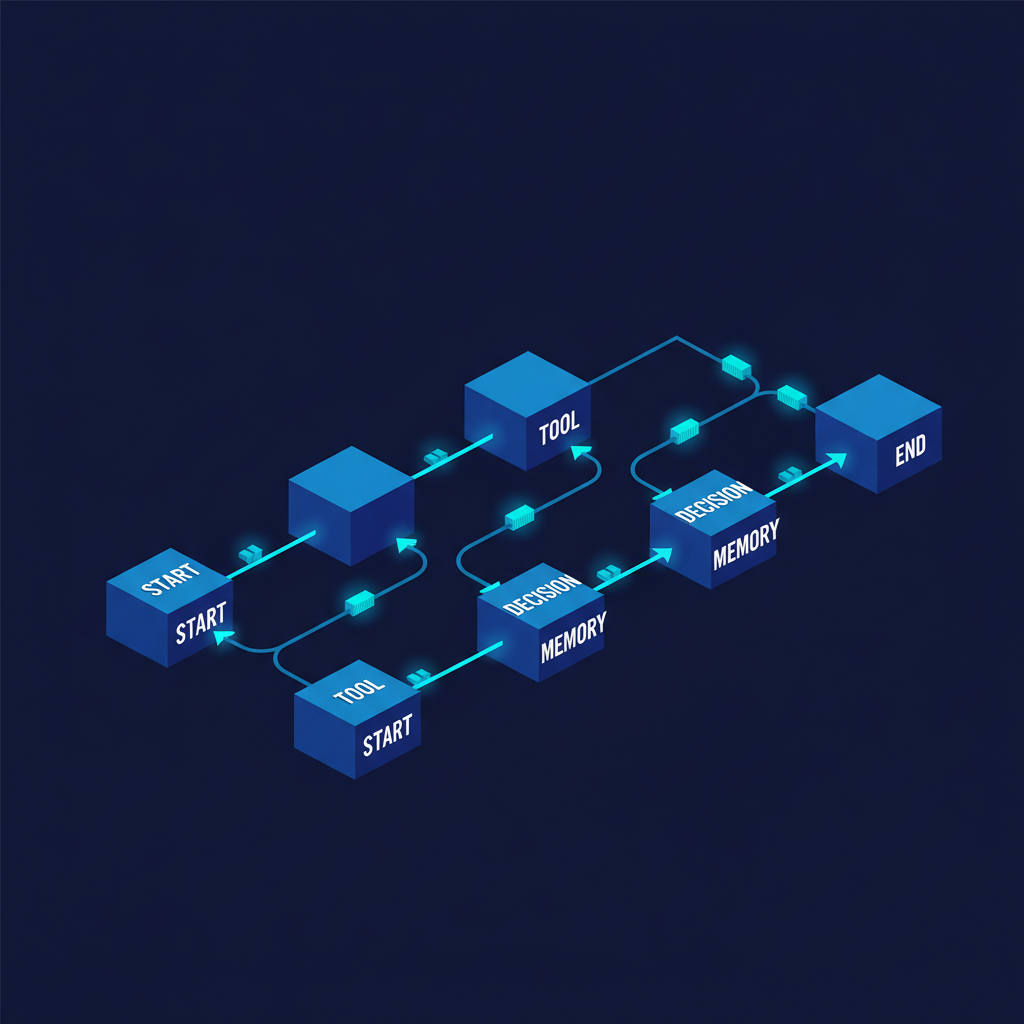

CrewAI organizes agents around four primitives: Agents, Tasks, Tools, and Crews.

An Agent has a role, a goal, a backstory, and a set of tools. The role and backstory are not decorative — they are injected into the system prompt and meaningfully affect the agent's reasoning and output style. A "Senior Data Analyst" with a backstory about rigorous statistical methodology genuinely behaves differently from a "Junior Research Assistant" with a broader, more exploratory brief.

In practice, defining an agent means writing a role title, a clear goal statement, and a backstory paragraph that encodes the persona's priorities, methodology, and standards. A research scientist agent might be characterized by 15 years of ML systems experience, a commitment to empirical results over theoretical claims, and a habit of citing specific papers with quantitative findings. The agent is also assigned a specific model, a maximum iteration count to prevent runaway loops, and memory to retain context across tool calls.
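As a rough illustration of the agent definition described above, here is a minimal sketch. The goal text, the model name, and the iteration cap are placeholder assumptions of mine, not recommended values. Memory is left out because where it is configured may differ between CrewAI versions. The definition is kept as plain data and the library is imported lazily, so the persona can be reviewed without CrewAI installed.

```python
# Plain-data agent definition. Every value below is an illustrative assumption.
RESEARCHER = {
    "role": "Senior ML Research Scientist",
    "goal": "Find and summarize recent work on efficient model inference",
    "backstory": (
        "You have 15 years of ML systems experience. You trust empirical "
        "results over theoretical claims and you cite specific papers with "
        "quantitative findings."
    ),
    "llm": "gpt-4o",            # placeholder model name
    "max_iter": 5,              # cap on reasoning/tool iterations per task
    "allow_delegation": False,  # keep this agent focused on its own work
}


def build_researcher():
    """Create the CrewAI agent from the plain-data definition.

    The import is deferred so that the definition above can be read and
    checked without the crewai package being available.
    """
    from crewai import Agent

    return Agent(**RESEARCHER)
```

Keeping the persona in a dictionary also makes it easy to diff backstory changes in code review, which matters because those changes alter agent behavior.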

Tasks define the specific work to be done, the expected output format, and which agent is responsible. A research task specifies not just what to search for but what structure the output must follow — paper title, core technique, quantitative efficiency claims in benchmark context, and key limitations. A synthesis task downstream of the research task references the research task as context, which is how CrewAI passes outputs between tasks — the writer agent automatically receives the researcher's output as part of its own context.
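The task handoff described above could be sketched as follows. The description and expected-output strings are my own illustrative wording, and `build_tasks` is a hypothetical helper name. The only CrewAI-specific parts are the `Task` class and its `description`, `expected_output`, `agent`, and `context` arguments.

```python
# Plain-data task definitions. The wording is illustrative, not prescriptive.
RESEARCH_TASK = {
    "description": "Survey recent papers on efficient model inference.",
    "expected_output": (
        "For each paper: title, core technique, quantitative efficiency "
        "claims with benchmark context, and key limitations."
    ),
}

SYNTHESIS_TASK = {
    "description": "Write a synthesis of the research findings.",
    "expected_output": "A structured summary that cites the surveyed papers.",
}


def build_tasks(researcher, writer):
    """Build the two tasks and wire the research output into the synthesis.

    `researcher` and `writer` are assumed to be already-constructed agents.
    """
    from crewai import Task

    research = Task(agent=researcher, **RESEARCH_TASK)
    # Listing `research` in `context` is what hands its output to the writer.
    synthesis = Task(agent=writer, context=[research], **SYNTHESIS_TASK)
    return [research, synthesis]
```

Spelling out the output structure in `expected_output` is the cheapest way I know to keep downstream tasks from receiving free-form prose.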

Crew Configuration and Process Types

The Crew combines agents and tasks and specifies the execution process. You assemble the list of agents, the ordered list of tasks, and choose between sequential and hierarchical execution. Rate limiting for API calls can also be configured at the crew level to stay within provider quotas.

Process.sequential executes tasks in order, passing outputs as context. Process.hierarchical introduces a manager agent that plans and delegates tasks dynamically — it is more flexible but substantially more expensive in token usage and more prone to planning errors.

For most production use cases, I recommend starting with Process.sequential. The predictability and debuggability advantages outweigh the flexibility of hierarchical execution until you have a clear reason to need dynamic task allocation.
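A minimal sketch of the crew assembly discussed in this section, under a few assumptions: the rate limit of 10 requests per minute and the manager model name are placeholders, and `crew_settings` and `build_crew` are hypothetical helper names. The sketch defaults to sequential execution and only adds a manager model when hierarchical execution is explicitly requested.

```python
def crew_settings(hierarchical: bool = False) -> dict:
    """Return crew-level settings as plain data (all values illustrative)."""
    settings = {
        "process": "hierarchical" if hierarchical else "sequential",
        "max_rpm": 10,  # crew-level cap on requests per minute
    }
    if hierarchical:
        # Hierarchical execution needs a manager; the model is a placeholder.
        settings["manager_llm"] = "gpt-4o"
    return settings


def build_crew(agents, tasks, hierarchical: bool = False):
    """Assemble a crew from existing agents and an ordered task list."""
    from crewai import Crew, Process

    settings = crew_settings(hierarchical)
    # Map the plain string onto Process.sequential or Process.hierarchical.
    process = getattr(Process, settings.pop("process"))
    return Crew(agents=agents, tasks=tasks, process=process, **settings)
```

With agents and tasks in hand, something like `build_crew(agents, tasks).kickoff()` would start a sequential run.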

Where CrewAI Actually Shines

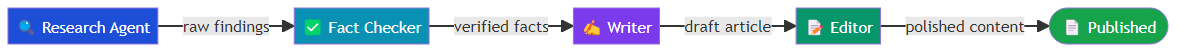

Content and research pipelines. The role-based model maps naturally onto editorial workflows. A research agent gathers sources, a fact-checker validates claims, a writer drafts content, an editor refines it. Each agent's backstory can encode editorial standards, style guides, and quality criteria in natural language. I have seen this pattern produce genuinely high-quality outputs on complex research synthesis tasks.

Rapid prototyping. The time from concept to working prototype with CrewAI is remarkably short. The natural language role and task definitions lower the barrier for non-ML engineers to reason about and modify agent behavior. This is not a trivial advantage — it dramatically expands who can build agent systems.

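Long-running background tasks. CrewAI's built-in memory systems (short-term, long-term, entity, and user memory) make it well suited to tasks that accumulate context over time. Long-term memory persists across sessions, which enables useful agent continuity. As far as I can tell from the documentation, the default setup stores long-term records in SQLite and uses ChromaDB for the embedding-backed short-term and entity memory, so check the storage backend for your version before planning around it.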

Tool integration. The crewai_tools package ships with a wide range of pre-built tool integrations (web search, file operations, GitHub, browser automation). The tool interface is clean and composable, and adding custom tools requires minimal boilerplate.

The Failure Modes You Need to Know

Role bleed. Agents do not reliably stay in their lane. A research agent with broad tools and a vague backstory will sometimes start writing synthesis content instead of surfacing raw findings. A writer agent will sometimes conduct its own research rather than working from the provided context. This is a prompting problem, but it surfaces as a collaboration breakdown and it is surprisingly persistent even with carefully written backstories.

Context window bloat in sequential chains. In long sequential pipelines, each task's context includes the full output of all upstream tasks. By the fifth or sixth task in a chain, the context is enormous, expensive, and often redundant. You need to explicitly design context trimming — either by specifying precisely what each task should pass forward or by post-processing outputs before they become context for downstream tasks.
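One way to do the post-processing mentioned above is a small trimming helper that runs on a task's output before it is handed downstream. This is a sketch of the general idea, not a CrewAI feature: `trim_for_downstream` is a hypothetical name and the character budget is an arbitrary assumption. How you wire it in depends on your pipeline; one option is to split a long chain into two crew runs and pass the trimmed text as an input to the second.

```python
def trim_for_downstream(output: str, max_chars: int = 1500) -> str:
    """Keep whole leading paragraphs of `output` within a character budget.

    Paragraphs are separated by blank lines. If even the first paragraph is
    over budget, fall back to a hard cut so the result is never empty for
    non-empty input.
    """
    kept = []
    used = 0
    for paragraph in output.split("\n\n"):
        paragraph = paragraph.strip()
        if not paragraph:
            continue
        # Account for the blank-line separator between kept paragraphs.
        cost = len(paragraph) + (2 if kept else 0)
        if used + cost > max_chars:
            break
        kept.append(paragraph)
        used += cost
    if not kept:
        return output.strip()[:max_chars]
    return "\n\n".join(kept)
```

Keeping whole paragraphs rather than cutting mid-sentence assumes the upstream task puts its most important findings first, which is worth stating in that task's expected output.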

Hierarchical process reliability. Process.hierarchical introduces a manager LLM that makes planning decisions. That manager can make poor decisions — over-specifying task breakdowns, assigning tasks to the wrong agents, or generating malformed delegation structures. The failure mode is often subtle: the crew completes successfully but produces results that do not match the original goal because the manager mis-specified a subtask.

Limited branching and iteration. CrewAI's task model is fundamentally linear (even with hierarchical processes). If a task's output is low quality, there is no built-in mechanism to send it back for revision within the same crew run. Agent-level max_iter settings give an agent a bounded number of reasoning and tool-call iterations within a single task, which leaves some room for self-correction, but that is not the same as routing a finished output back to an earlier step. You cannot implement complex conditional re-routing the way LangGraph can.
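Since there is no revision path inside a crew run, one workaround is to put the loop outside the crew. The sketch below is framework-agnostic and every name in it is hypothetical: `run_once` stands in for whatever starts your crew and returns its text (for example, a wrapper around a kickoff call that passes the feedback as an input), and `is_acceptable` stands in for your own quality check.

```python
from typing import Callable, Tuple


def run_with_revision(
    run_once: Callable[[str], str],
    is_acceptable: Callable[[str], bool],
    max_attempts: int = 3,
) -> Tuple[str, int]:
    """Re-run a pipeline until its output passes a check or attempts run out.

    Returns the last output and the number of attempts used. The feedback
    string is empty on the first attempt and carries the rejected output on
    later attempts.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")

    feedback = ""
    result = ""
    for attempt in range(1, max_attempts + 1):
        result = run_once(feedback)
        if is_acceptable(result):
            return result, attempt
        feedback = f"Previous attempt was rejected; revise it:\n{result}"
    # Out of attempts: return the last output so the caller can decide.
    return result, max_attempts
```

Each retry is a full crew run, so this gets expensive quickly. It is a stopgap for simple quality gates, not a substitute for real conditional routing.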

A Practical Decision Framework

Use CrewAI when:

- Your workflow maps naturally onto a team of specialists with distinct roles

- The task sequence is known in advance and primarily linear

- Rapid development and iteration speed are priorities

- You need built-in memory and long-term context across sessions

Use LangGraph instead when:

- You need complex conditional routing or loops

- Failure recovery and checkpointing are critical requirements

- You need fine-grained control over state management

- The workflow involves parallel branches that need to merge

CrewAI is not a toy framework — it has production deployments handling genuine workloads. But it works best when you design your crew with the same care you would use designing an actual team: clear roles, explicit handoffs, and well-defined deliverables at each stage. When people treat it as a magic coordination layer, it fails. When they treat it as a structured collaboration protocol, it delivers.
