When Hoffmann et al. published "Training Compute-Optimal Large Language Models" in 2022 (the paper the community quickly dubbed "Chinchilla"), it felt like a corrective slap to the field. The finding was simple and devastating: GPT-3, Gopher, and most other large models at the time were significantly undertrained. They had been scaled up in parameters without scaling the training data proportionally. Chinchilla's central claim was that model size and training tokens should grow in equal proportion, so for a given compute budget you should train a considerably smaller model on considerably more data than was then common practice.

That insight was genuinely transformative. It shifted investment priorities, influenced the design of Llama, Mistral, and almost every serious open model since, and gave practitioners a principled framework for making training decisions. But science moves forward, and the clean narrative of Chinchilla has since been complicated by a wave of follow-up work and practical experience. Here is where we actually stand in early 2026.

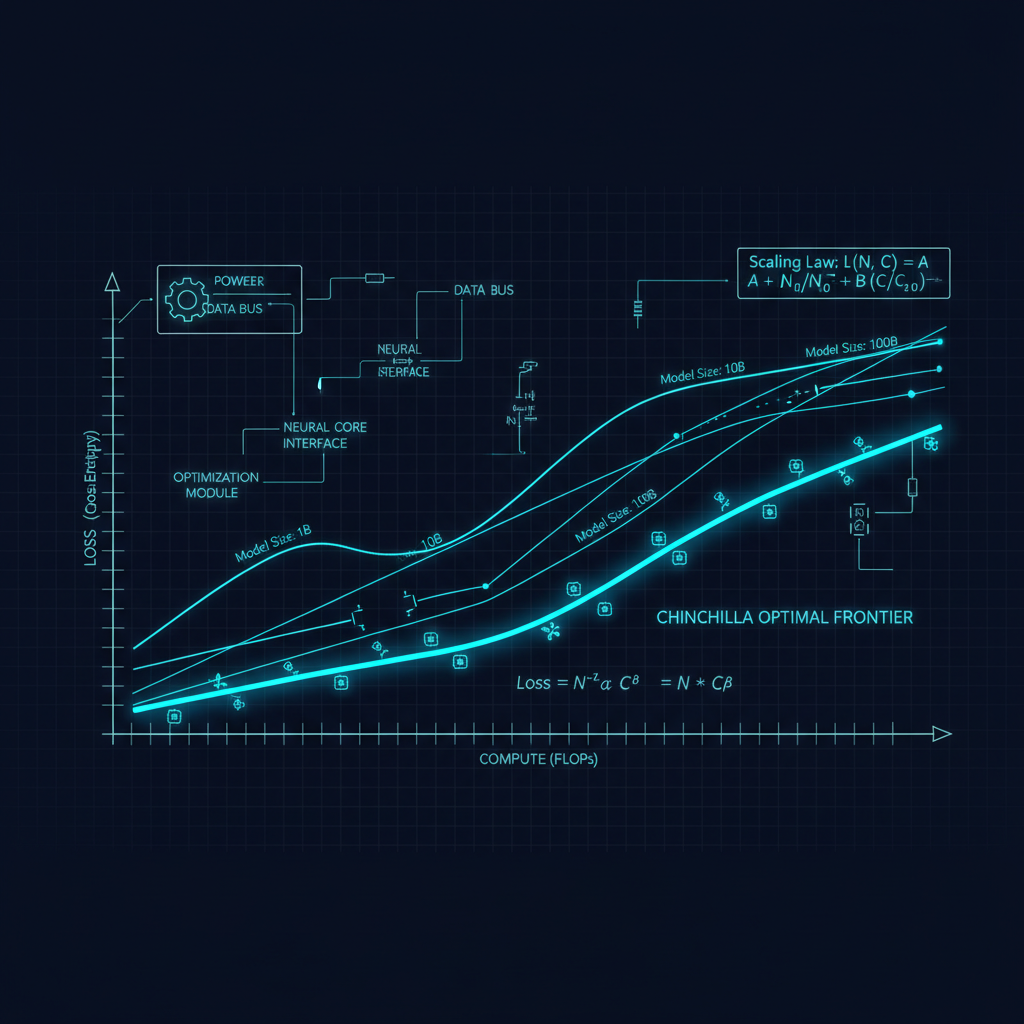

The Original Chinchilla Finding

To understand what has changed, it helps to be precise about what Chinchilla actually claimed. The paper estimated optimal token-to-parameter ratios by training hundreds of models across different sizes and data quantities, then fitting scaling laws to the results. The authors' conclusion: the optimal number of training tokens scales roughly linearly with model parameters, at about 20 tokens per parameter. For a 10B parameter model, compute-optimal training would use around 200B tokens. GPT-3, by comparison, was trained on roughly 300B tokens for 175B parameters, fewer than 2 tokens per parameter, so the Chinchilla recipe called for roughly ten times more tokens per parameter.
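
You can reproduce this arithmetic with the widely used approximation that training costs about 6 FLOPs per parameter per token (C ≈ 6ND). The sketch below assumes a fixed ratio of 20 tokens per parameter. That ratio is a rule of thumb distilled from the paper, not its exact fitted law, and the function names are hypothetical.

```python
import math

TOKENS_PER_PARAM = 20.0  # Chinchilla rule of thumb: D is roughly 20 * N


def training_flops(n_params: float, n_tokens: float) -> float:
    """Common approximation: about 6 FLOPs per parameter per training token."""
    return 6.0 * n_params * n_tokens


def compute_optimal(budget_flops: float, ratio: float = TOKENS_PER_PARAM) -> tuple[float, float]:
    """Split a FLOP budget into (params, tokens) under C = 6ND and D = ratio * N.

    Substituting D = ratio * N into C = 6ND gives N = sqrt(C / (6 * ratio)).
    """
    n_params = math.sqrt(budget_flops / (6.0 * ratio))
    return n_params, ratio * n_params


# GPT-3: 175B parameters trained on roughly 300B tokens.
gpt3_ratio = 300e9 / 175e9
print(f"GPT-3: {gpt3_ratio:.1f} tokens/param vs. ~{TOKENS_PER_PARAM:.0f} for Chinchilla")

# Recover N and D from the budget of a 10B model trained on 200B tokens.
n, d = compute_optimal(training_flops(10e9, 200e9))
print(f"N = {n:.2e} params, D = {d:.2e} tokens")  # N = 1.00e+10 params, D = 2.00e+11 tokens
```

The rule of thumb is symmetric. Given any budget, the optimal parameter count grows with the square root of compute, and the token count grows at the same rate.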

The validation was Chinchilla itself: a 70B parameter model trained on 1.4T tokens that outperformed Gopher (280B parameters) on nearly every benchmark, while using the same compute budget. It was a compelling proof of concept.
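
Under the same C ≈ 6ND approximation, the two training runs land in the same compute range. This is a back-of-the-envelope estimate, not the paper's exact FLOP accounting.

```latex
\begin{aligned}
C_{\text{Chinchilla}} &\approx 6 \times (70 \times 10^{9}) \times (1.4 \times 10^{12}) \approx 5.9 \times 10^{23}\ \text{FLOPs} \\
C_{\text{Gopher}} &\approx 6 \times (280 \times 10^{9}) \times (300 \times 10^{9}) \approx 5.0 \times 10^{23}\ \text{FLOPs}
\end{aligned}
```

Chinchilla spends a comparable budget on 4x fewer parameters and roughly 4.7x more training tokens.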

What Subsequent Practice Has Revealed

The first complication came quickly: the Chinchilla analysis optimizes for training compute, not for inference cost or deployment economics. This matters enormously in practice. If you are going to serve a model to millions of users, a smaller model is dramatically cheaper per query. It makes sense to "overtrain" a smaller model on more data than Chinchilla recommends if the result is a model that performs comparably to a larger one but costs a fraction as much to run.

Meta's Llama 2 and especially Llama 3 leaned into this insight explicitly. Llama 3 70B was trained on over 15 trillion tokens — roughly 200 tokens per parameter, versus Chinchilla's recommendation of around 20. The result was a 70B model that competes seriously with models two to three times its size. Mistral's models followed a similar philosophy, consistently punching above their weight class.

This "inference-optimal" framing has since been formalized by several groups, for example in Sardana and Frankle's "Beyond Chinchilla-Optimal" (2023). The key observation is that the Chinchilla scaling laws count only training compute and ignore inference entirely. In real deployments, a popular model might serve billions of inference calls. Spreading those inference costs over a smaller, more thoroughly trained model changes the math substantially.
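
Here is a minimal sketch in the spirit of inference-aware scaling analyses that makes the trade-off concrete. It makes three assumptions:

- The parametric loss fit reported in the Chinchilla paper holds: L(N, D) = E + A/N^α + B/D^β, with E = 1.69, A = 406.4, B = 410.7, α = 0.34, β = 0.28.
- Training costs about 6ND FLOPs.
- Serving costs about 2N FLOPs per inference token.

For a fixed target loss, it searches for the model size with the lowest lifetime compute. The target loss and inference volumes are hypothetical inputs, so read the output qualitatively rather than as a recommendation.

```python
from __future__ import annotations

# Parametric loss fit reported by Hoffmann et al. (2022):
#   L(N, D) = E + A / N**ALPHA + B / D**BETA
E, A, B = 1.69, 406.4, 410.7
ALPHA, BETA = 0.34, 0.28


def tokens_for_target_loss(n_params: float, target_loss: float) -> float | None:
    """Training tokens a model of n_params needs to reach target_loss under the fit.

    Returns None when the model is too small to reach the target at any data size.
    """
    data_term = target_loss - E - A / n_params**ALPHA
    if data_term <= 0:
        return None
    return (B / data_term) ** (1 / BETA)


def cheapest_model(target_loss: float, inference_tokens: float) -> tuple[float, float, float]:
    """Grid-search model sizes for the lowest lifetime compute at a fixed target loss.

    Lifetime FLOPs ~= 6 * N * D (training) + 2 * N * inference_tokens (serving).
    Returns (n_params, train_tokens, lifetime_flops).
    """
    best = None
    for i in range(200):
        n = 10 ** (8 + 0.02 * i)  # log-spaced grid from 1e8 to ~1e12 parameters
        d = tokens_for_target_loss(n, target_loss)
        if d is None:
            continue
        total = 6 * n * d + 2 * n * inference_tokens
        if best is None or total < best[2]:
            best = (n, d, total)
    if best is None:
        raise ValueError("target loss is unreachable on this grid")
    return best


for served in (0.0, 1e13):  # hypothetical lifetime inference volumes, in tokens
    n, d, _ = cheapest_model(target_loss=2.0, inference_tokens=served)
    print(f"inference tokens {served:.0e}: N = {n:.1e}, D = {d:.1e}, D/N = {d / n:.0f}")
```

Heavy serving volume changes the answer. The cheapest model that reaches the same loss becomes smaller and is trained on many more tokens per parameter, the same direction Llama 3 took.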

The Data Wall and Synthetic Data

A second complication is more troubling: the internet may be running out. Or rather, high-quality text on the public internet is a finite resource, and frontier models are beginning to approach that ceiling. Estimates vary, but credible analyses suggest that the total stock of high-quality English text suitable for pretraining — filtered web crawls, books, scientific papers, code — may be in the range of 10-20 trillion tokens. Models like Llama 3 and the frontier closed models are already training on multi-trillion token datasets that include significant repetition and filtering relaxation.
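
Overtraining and a finite data stock pull against each other. With a fixed stock of unique tokens, the largest model you can train without repeating data shrinks as the tokens-per-parameter ratio grows. The sketch below takes the rough 10-20 trillion token estimate above as an assumption, not a measured figure. It compares a Chinchilla-style ratio of about 20 with the roughly 200 used for Llama 3 70B, and the helper names are hypothetical.

```python
def max_params_without_repetition(unique_tokens: float, tokens_per_param: float) -> float:
    """Largest model whose desired training run fits in one pass over unique_tokens."""
    return unique_tokens / tokens_per_param


def epochs_needed(n_params: float, tokens_per_param: float, unique_tokens: float) -> float:
    """Passes over the unique data needed to reach the desired tokens-per-parameter ratio."""
    return n_params * tokens_per_param / unique_tokens


STOCK_LOW, STOCK_HIGH = 10e12, 20e12  # assumed stock of high-quality text, in tokens

for ratio in (20, 200):
    lo = max_params_without_repetition(STOCK_LOW, ratio)
    hi = max_params_without_repetition(STOCK_HIGH, ratio)
    print(f"{ratio:>3} tokens/param: at most {lo / 1e9:.0f}B-{hi / 1e9:.0f}B params before repeating data")

# Passes a ~15T-token run for a 70B model would need if only 10T unique tokens existed.
print(f"epochs over 10T unique tokens: {epochs_needed(70e9, 15e12 / 70e9, STOCK_LOW):.1f}")
```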

This has driven serious investment in synthetic data. The intuition is that if a capable model can generate high-quality reasoning chains, code solutions, or explanations, those outputs might serve as training data for the next generation. DeepSeek's R1 paper showed that smaller models fine-tuned on reasoning traces generated by R1, a form of synthetic chain-of-thought data, can develop remarkably strong reasoning capabilities. OpenAI has been opaque about its data pipeline, but it seems likely that GPT-4o and subsequent models incorporate substantial synthetic data.

The critical question is whether synthetic data can substitute for real human-generated text at scale, or whether it introduces systematic biases and mode collapse. Early evidence suggests it can be highly effective for specific capabilities — especially reasoning and code — but may not be a wholesale replacement for diverse, naturally occurring text.

Scaling Laws for Specific Capabilities

A third revision concerns the granularity of scaling predictions. The Chinchilla scaling laws were fit to aggregate pretraining loss, but different capabilities scale differently. Coding ability, mathematical reasoning, factual recall, and instruction following all have distinct scaling curves. Some capabilities appear to scale smoothly and predictably with compute; others appear nearly flat and then exhibit sharp phase transitions, the behavior that Wei et al. (2022) and others have called "emergent abilities."

This heterogeneity makes simple compute-optimal recipes insufficient for practitioners who care about specific capability profiles. A model trained to Chinchilla-optimal specs for general language modeling may be significantly suboptimal for, say, mathematical reasoning, where data quality and problem diversity matter more than aggregate token count.

Architecture Matters More Than Scaling Laws Suggest

Chinchilla's scaling laws were derived from dense transformer models, but the architecture landscape has shifted. Mixture-of-experts (MoE) models such as Mixtral and Gemini 1.5, and reportedly GPT-4, operate with a different compute-parameter tradeoff: they have more total parameters but activate only a fraction of them per forward pass, reducing inference cost while maintaining model capacity. The Chinchilla framework does not straightforwardly apply to MoE architectures, and we are still in the early stages of understanding optimal scaling for these systems.
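
For MoE models, the N in Chinchilla's formulas becomes ambiguous. Per-token compute tracks the active parameters, while memory footprint tracks the total. The sketch below uses Mistral's published figures for Mixtral 8x7B (about 46.7B total and 12.9B active parameters per token) and the usual estimate of about 2 FLOPs per active parameter per forward token. It compares against a hypothetical dense model of the same total size. Whether scaling laws should use total or active parameters is the open question, so the code simply reports both.

```python
from dataclasses import dataclass


@dataclass
class ModelSize:
    name: str
    total_params: float   # parameters that must be held in memory
    active_params: float  # parameters used in each token's forward pass

    def forward_flops_per_token(self) -> float:
        # About 2 FLOPs (one multiply, one add) per active parameter per token.
        return 2 * self.active_params

    def active_fraction(self) -> float:
        return self.active_params / self.total_params


mixtral = ModelSize("Mixtral 8x7B", total_params=46.7e9, active_params=12.9e9)
dense = ModelSize("hypothetical dense, same total", total_params=46.7e9, active_params=46.7e9)

for m in (mixtral, dense):
    print(f"{m.name}: {m.forward_flops_per_token():.2e} FLOPs/token, "
          f"{m.active_fraction():.0%} of weights active")
```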

Similarly, alternatives to standard full attention, such as sliding window attention and linear attention approximations, along with state space models like Mamba that replace attention altogether, all change the effective compute-per-token relationship in ways that require updated scaling analyses.
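
The sketch below illustrates how the attention variant shifts compute per token. It relies on two assumptions:

- The approximation from Kaplan et al. (2020): forward FLOPs per token ≈ 2N + 2·n_layer·n_ctx·d_attn.
- A sliding window of width W caps the attended context at min(n_ctx, W).

The ~7B-scale configuration is hypothetical. The formula ignores kernel and KV-cache details and does not model linear attention or state space models, whose context term is replaced by a fixed-size state update.

```python
from __future__ import annotations


def forward_flops_per_token(n_params: float, n_layer: int, d_attn: int,
                            n_ctx: int, window: int | None = None) -> float:
    """Approximate forward FLOPs per token: 2N + 2 * n_layer * n_ctx_eff * d_attn.

    With a sliding window, each token attends to at most `window` positions,
    so the context-dependent term stops growing once n_ctx exceeds the window.
    """
    n_ctx_eff = n_ctx if window is None else min(n_ctx, window)
    return 2 * n_params + 2 * n_layer * n_ctx_eff * d_attn


# Hypothetical ~7B-parameter configuration.
N, LAYERS, D_ATTN, WINDOW = 7e9, 32, 4096, 4096

for ctx in (4_096, 32_768, 131_072):
    full = forward_flops_per_token(N, LAYERS, D_ATTN, ctx)
    swa = forward_flops_per_token(N, LAYERS, D_ATTN, ctx, window=WINDOW)
    print(f"ctx {ctx:>7}: full {full:.2e}, sliding window {swa:.2e} FLOPs/token")
```

Under this approximation, the context-dependent term at long context lengths can exceed the 2N term. A scaling law stated purely in terms of N and D leaves that cost out.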

What This Means in Practice

For practitioners, the updated picture suggests several things:

First, do not blindly apply the Chinchilla token-to-parameter ratio. If you are training a model that will see heavy inference use, overtraining a smaller model is almost certainly the right call. The 20:1 token-to-parameter ratio is a starting point for academic analysis, not a deployment recipe.

Second, data quality dominates quantity at the frontier. A model trained on 3 trillion carefully filtered, diverse tokens will likely outperform one trained on 10 trillion tokens of indiscriminately collected web text. Invest in data curation.

Third, capability-specific scaling behavior means you need to benchmark throughout training, not just at the end. Understanding where your model is on the scaling curve for the capabilities you care about allows you to make better decisions about when to stop, what data to add, and whether architectural changes are needed.
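
One lightweight way to track where a capability sits on its curve is to fit a separate power law to each capability's checkpoint evaluations. You then watch how new checkpoints deviate from the extrapolation. The sketch below assumes error ≈ a·C^(-b) in training compute C with no irreducible floor. That is a simplification, and it will fit poorly for capabilities with abrupt jumps. The function names are hypothetical.

```python
from __future__ import annotations

import math


def fit_power_law(compute: list[float], error: list[float]) -> tuple[float, float]:
    """Least-squares fit of error ~= a * compute**(-b) in log-log space; returns (a, b)."""
    if len(compute) != len(error) or len(compute) < 2:
        raise ValueError("need at least two matching (compute, error) pairs")
    xs = [math.log(c) for c in compute]
    ys = [math.log(e) for e in error]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxx = sum((x - x_mean) ** 2 for x in xs)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = y_mean - slope * x_mean
    return math.exp(intercept), -slope


def predict(a: float, b: float, compute: float) -> float:
    """Extrapolated error at a given training compute under the fitted power law."""
    return a * compute ** (-b)
```

Feed it (compute, error) pairs from your own evaluation harness, one capability at a time. A run of checkpoints landing well off the extrapolated curve, in either direction, is worth investigating before committing more compute.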

Fourth, synthetic data is a real lever but requires care. The models generating your synthetic data have their own biases and failure modes; those will propagate into your trained model. Human evaluation of synthetic data quality cannot be skipped.

Looking Forward

The deepest question the scaling law debate leaves open is whether we are approaching fundamental limits or whether there are still qualitative shifts in capability waiting at higher compute scales. The history of AI has repeatedly surprised pessimists who thought scaling would plateau. But the physics of compute, the economics of data centers, and the finiteness of human-generated text all impose real constraints.

My read is that the next major gains will come not from simply scaling further along the existing curves, but from architectural innovation, better data curation, and training strategies that are more targeted to specific capabilities. The Chinchilla paper was a critical correction. The corrections to Chinchilla are themselves being corrected. That is how science is supposed to work.

The field is better for having clear empirical frameworks, even when those frameworks turn out to be incomplete. Understanding why they are incomplete is where the interesting work lives.