DeepSeek-Prover-V2: Reinforcement Learning Cracks Formal Mathematics

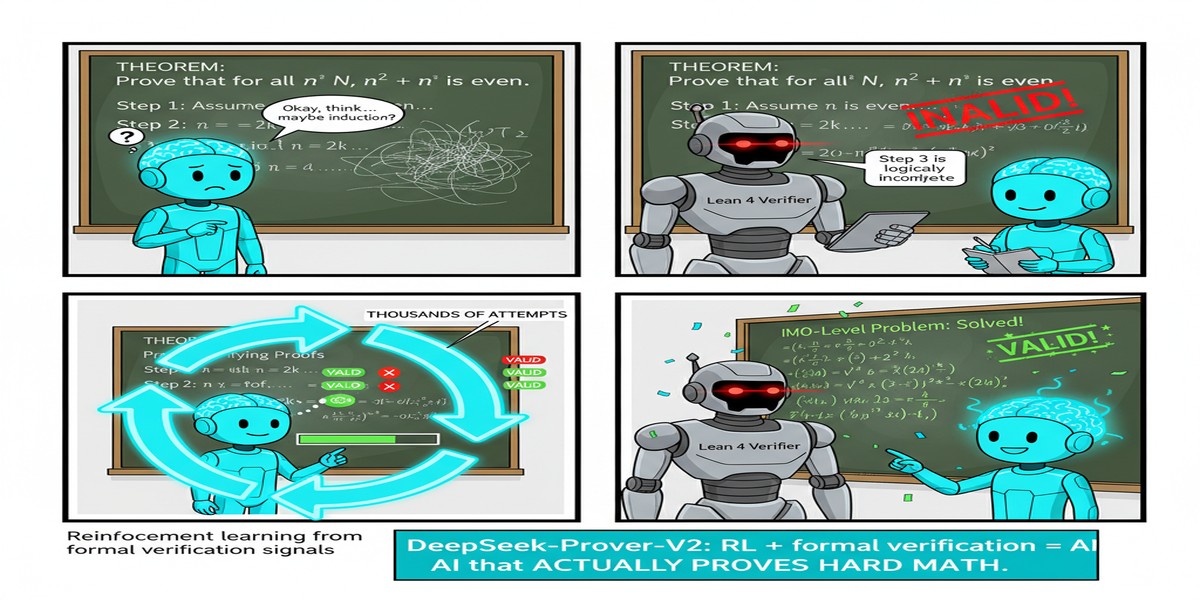

Mathematics has always been the hardest domain for AI systems — not because of the complexity of individual steps, but because of the requirement for complete, verifiable correctness. A proof is either right or wrong. There's no partial credit for getting the intuition right while making a sign error on page 3.

Formal theorem proving takes this a step further: proofs must be verified by a computer proof assistant (Lean, Coq, Isabelle), which means every step must be formally correct in a way that a program can verify. This is brutally hard. The gap between informal mathematical reasoning ("intuitively, if we can show X then Y follows...") and formal proof construction ("therefore, by Lean4.Theorem.7.3, given hypothesis h1...") is enormous.

DeepSeek-Prover-V2 (arXiv: 2504.21801, Apr 2025) closes much of this gap through a recursive subgoal decomposition pipeline combined with reinforcement learning — and the results rewrite what we thought was possible for neural theorem provers.

The Core Problem: Why Formal Proofs Are Hard for LLMs

Standard LLMs can discuss mathematical concepts fluently. They can often sketch proof outlines. They fail at formal theorem proving for a specific reason: proofs must compose correctly. A wrong lemma at step 3 invalidates everything that depends on it, and the proof assistant will catch this immediately.

The failure mode is search depth. Olympiad-level proofs require sequences of hundreds or thousands of verified steps, each building on previous ones. Existing approaches either:

- Work bottom-up (prove small lemmas then compose) — good for structured problems but brittle

- Work top-down (plan the proof then fill in details) — flexible but the top-down plan often requires steps the model can't formalize

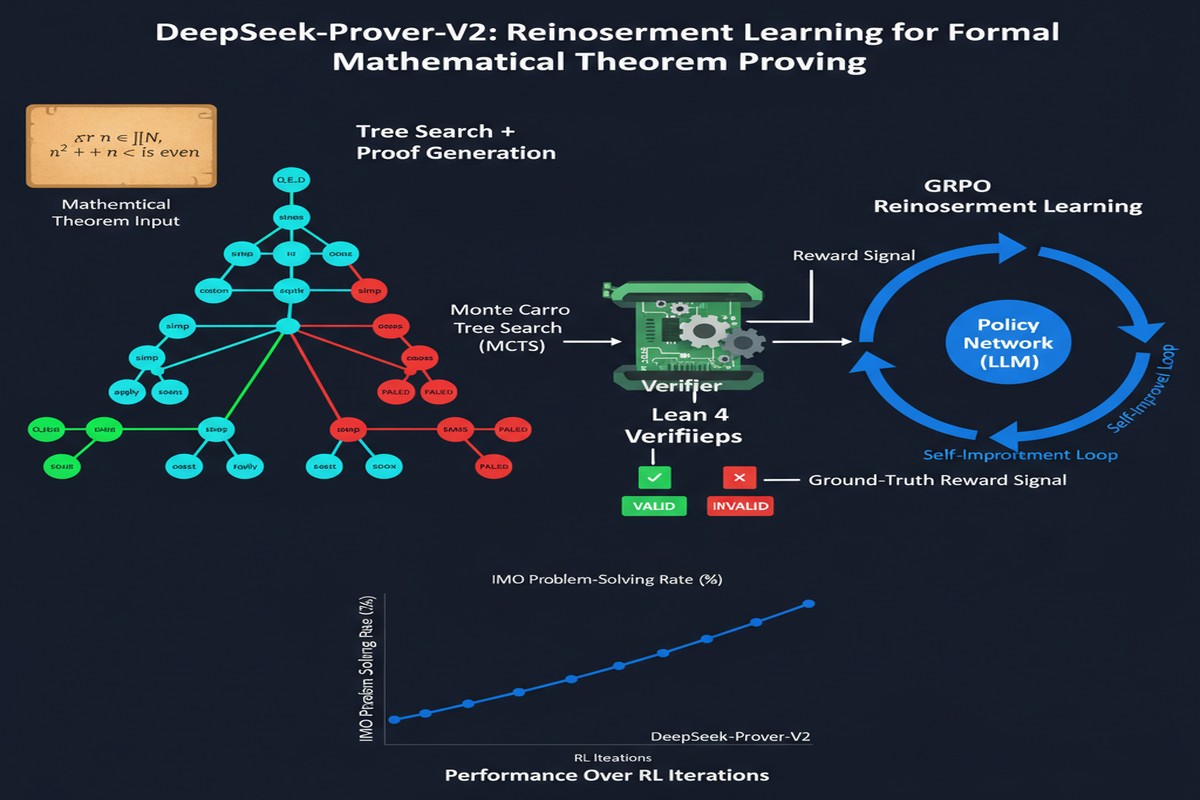

DeepSeek-Prover-V2 introduces a hybrid: recursive subgoal decomposition.

The Recursive Subgoal Decomposition Pipeline

flowchart TD

A[Hard Theorem T] --> B[DeepSeek-V3:\nDecompose into subgoals G1, G2, G3]

B --> C[Subgoal G1]

B --> D[Subgoal G2]

B --> E[Subgoal G3]

C --> F{Prove G1 in Lean4?}

D --> G{Prove G2 in Lean4?}

E --> H{Prove G3 in Lean4?}

F -->|Yes| I[Verified proof of G1]

F -->|No| J[Decompose G1 further]

G -->|Yes| K[Verified proof of G2]

H -->|Yes| L[Verified proof of G3]

I --> M[Compose into proof of T]

K --> M

L --> M

M --> N[Full Lean4 proof of T]

style N fill:#059669,color:#fff

style B fill:#2563eb,color:#fff

style J fill:#d97706,color:#fff

Phase 1 — Decomposition: DeepSeek-V3 (the general-purpose model) breaks a complex theorem into a sequence of subgoals. This is an informal decomposition — it uses mathematical intuition to identify what lemmas need to be proven to establish the main result.

Phase 2 — Formalization: Each subgoal is independently formalized and proven in Lean4. If a subgoal is still too hard for direct proof, it's recursively decomposed further.

Phase 3 — Cold-start training: Successful proof traces (subgoal decomposition + individual Lean4 proofs + composition) are synthesized into chain-of-thought training data. This data initializes DeepSeek-Prover-V2's base training.

Phase 4 — Reinforcement learning: The Lean4 proof checker provides verifiable reward signals (proof correct/incorrect). RL is applied to strengthen the connection between informal reasoning (sketching subgoals) and formal proof construction (Lean4 steps). The verifier is the oracle — no human annotation of correctness needed.

Results: A New State of the Art

| Benchmark | Previous SOTA | DeepSeek-Prover-V2-671B |

|---|---|---|

| MiniF2F-test pass ratio | ~70% | 88.9% |

| PutnamBench (out of 658) | ~35 solved | 49 solved |

MiniF2F is the standard benchmark for formal mathematical reasoning, containing competition-level problems (AMC, AIME, IMO, Putnam). An 88.9% pass ratio is extraordinary — months ago, 70% was considered excellent.

The Putnam benchmark is harder: 658 problems from the Putnam Mathematical Competition, one of the hardest undergraduate-level math competitions. DeepSeek-Prover-V2 solves 49 — a 40% improvement over previous neural systems.

The paper also introduces ProverBench, a new evaluation dataset of 325 problems including 15 formalized AIME 2024-2025 problems. This provides a contamination-resistant benchmark for future work.

Why Reinforcement Learning Works Here

The key insight: formal proof checking provides verifiable rewards with zero ambiguity. A proof either compiles in Lean4 or it doesn't. This is the perfect RL training signal:

- Dense feedback (each proof step succeeds or fails)

- No reward hacking (you can't fake a valid Lean4 proof)

- Scalable data generation (the proof checker runs automatically)

Compare this to RL for language tasks where reward signals require human evaluation or imperfect automated metrics. Formal mathematics is one of the few domains where RL has a perfect, automatic oracle. This is why theorem proving has been a productive RL domain since AlphaGo inspired AlphaProof.

The Hybrid Informal-Formal Architecture

DeepSeek-Prover-V2 uses DeepSeek-V3 for informal decomposition and a specialized smaller model for formal Lean4 proof construction. This division makes sense:

- Informal reasoning (which subgoals to target) is a language generation problem where large models excel

- Formal proof construction (Lean4 syntax, tactic sequences) is a specialized skill where fine-tuned smaller models can specialize

The 671B model handles both, but the architecture ensures the informal planning stage can leverage DeepSeek-V3's mathematical knowledge.

Why This Matters

Formal theorem proving is not just academic. The ability to produce machine-verified proofs has direct applications:

Software verification: Formally proving that software implementations are correct — no bugs by construction. Systems like seL4 (a verified operating system kernel) demonstrate this is possible but require enormous human effort. AI-assisted formal verification could dramatically reduce this cost.

Cryptographic proof verification: Cryptographic protocols rely on mathematical correctness. Automated formal verification of cryptographic proofs could catch subtle errors that have led to catastrophic security failures.

Mathematical research itself: Mathematicians increasingly use proof assistants for complex proofs. AI systems that can handle formal proofs at near-Olympiad level could become genuine research collaborators.

My Take

DeepSeek-Prover-V2 is one of the most technically impressive papers I've read this year. The recursive subgoal decomposition architecture is exactly the right approach — it bridges the informal-formal gap by using informal reasoning where LLMs are strong and formal verification where the proof assistant is authoritative.

The 88.9% MiniF2F result deserves to be celebrated. But I want to push back slightly on the framing: MiniF2F and Putnam are competition problems with known structure. Real mathematical research involves exploring open-ended conjectures where the problem structure itself is unknown. The jump from "solving well-defined competition problems" to "contributing to open mathematical research" is still enormous.

The next frontier: can these systems formalize and verify new mathematical results, not just solve known competition problems? That requires not just proof construction but conjecture generation — a qualitatively different capability.

What DeepSeek-Prover-V2 achieves is remarkable. What remains is harder. Let's not conflate impressive competition performance with mathematical creativity.

Paper: "DeepSeek-Prover-V2: Advancing Formal Mathematical Reasoning via Reinforcement Learning for Subgoal Decomposition", arXiv: 2504.21801, Apr 2025.

Explore more from Dr. Jyothi