When NVIDIA announced Blackwell, the industry responded with the usual mix of breathless excitement and marketing-fueled skepticism. Numbers like "30× faster inference than H100" sound great on a slide deck, but they tell you almost nothing about what the hardware actually does in the real world. That's why I was genuinely glad to see a rigorous academic paper drop in December 2025 — Microbenchmarking NVIDIA's Blackwell Architecture: An In-Depth Architectural Analysis (arXiv:2512.02189) by Aaron Jarmusch and colleagues. This is the kind of work the field needs: not benchmarks designed to make the vendor look good, but systematic measurements designed to answer the question every serious engineer is asking — what changed, and by how much?

The Paper in Brief

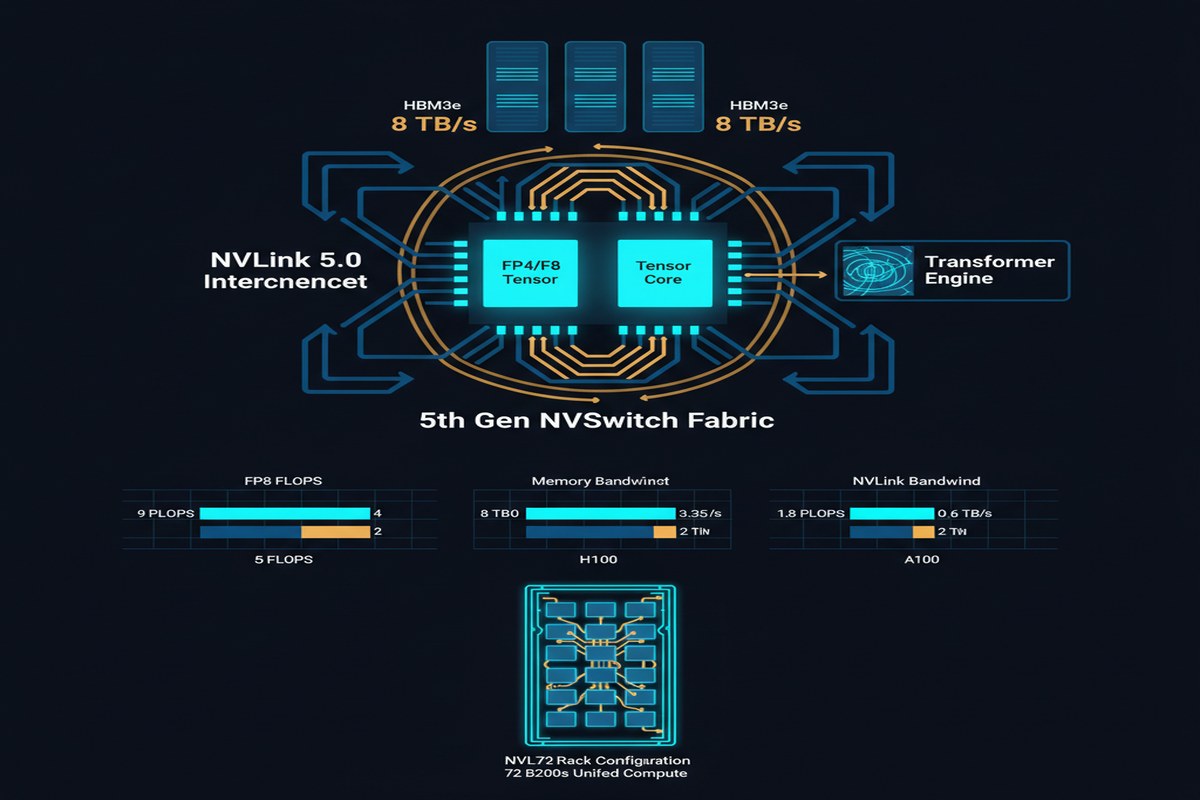

The paper is a comprehensive microbenchmark characterization of the NVIDIA B200 GPU. The authors focus on three architectural innovations that distinguish Blackwell from Hopper (H100/H200):

- Fifth-generation Tensor Cores with new

tcgen05PTX instructions - Tensor Memory (TMEM) — a new dedicated on-chip memory tier

- A Hardware Decompression Engine (DE) for accelerating sparse and quantized workloads

Their methodology is rigorous: they write custom PTX kernels to isolate individual hardware behaviors, measure performance across a range of problem sizes and configurations, and cross-validate with known architectural parameters where available. This is benchmarking done right.

What's New in Blackwell and Why It Matters

Fifth-Generation Tensor Cores and TMEM

The H100 introduced HBM3 and its 4th-gen Tensor Cores with FP8 precision. Blackwell goes further. The new tcgen05 PTX instruction family enables direct loading of matrix operands from TMEM — a new, dedicated register file-like memory sitting between shared memory (SMEM) and registers.

Why does this matter? In prior architectures, matrix operands for GEMM operations had to be staged through shared memory, creating bottlenecks when you're running large matrix multiplications back-to-back. TMEM eliminates this indirection for matrix-heavy workloads. The paper quantifies this: TMEM-driven GEMM achieves 1.85× ResNet-50 and 1.55× GPT-1.3B mixed-precision training throughput compared to H200 baselines.

That's not a marginal gain. 1.55× on GPT-1.3B training is meaningful if you're actually doing training work. The asymmetry — bigger gains on ResNet than GPT — is also informative: it tells you TMEM's benefits scale with compute intensity relative to memory bandwidth. Transformer workloads are memory-hungry, so they benefit less from faster compute than pure convolutions.

graph TD

A[Matrix Data in HBM] --> B[L2 Cache]

B --> C[Shared Memory SMEM]

C --> D[Registers]

D --> E[4th Gen Tensor Cores H100]

A --> F[L2 Cache]

F --> G[Tensor Memory TMEM - NEW]

G --> H[5th Gen Tensor Cores B200]

H --> I[Result]

style G fill:#00d4ff,color:#000

style H fill:#76b900,color:#fff

style E fill:#888,color:#fff

The Hardware Decompression Engine

This is the architectural choice that I think will have the biggest long-term impact, even if it generates the least hype right now. The B200 includes a dedicated silicon block that decompresses structured-sparse and quantized weight representations directly on the GPU — before data hits the Tensor Cores.

The implications: if you're doing INT4 or INT8 quantized inference (and if you're deploying LLMs at scale, you should be), the decompression overhead that previously ate into your compute budget is now handled by dedicated hardware that runs in parallel with the Tensor Cores. The paper shows 32% better energy efficiency than H200 on mixed-precision workloads, and a substantial fraction of that comes from the DE enabling more aggressive quantization without the software decompression tax.

flowchart LR

A[Compressed Weights\nINT4/FP8 in HBM] --> B{Hardware\nDecompression\nEngine}

B --> C[Decompressed\nWeights]

C --> D[5th Gen Tensor Cores]

D --> E[Result]

F[Activation Data] --> D

style B fill:#ff6b35,color:#fff

style D fill:#76b900,color:#fff

Energy Efficiency: The Metric That Actually Matters

32% better energy efficiency over H200 is the headline number, and it's worth unpacking. H200 was already more efficient than H100, so this is a compounding gain. More importantly, it comes from a combination of factors: TMEM reducing memory traffic, the DE offloading decompression, and architectural improvements in the Tensor Core scheduling pipeline.

For anyone running inference at scale in 2026, energy cost is no longer a secondary concern. It's often the primary cost driver after hardware capital. A 32% efficiency gain translates directly to lower operational costs and — if you care about this sort of thing — lower carbon emissions per inference.

Why This Matters

The Blackwell architecture makes a clear architectural bet: specialized silicon wins. TMEM is specialized silicon for matrix operand staging. The Decompression Engine is specialized silicon for quantization-friendly inference. This is the opposite of the "make the general-purpose GPU faster" approach that dominated GPU evolution for a decade.

This matters because it signals where NVIDIA sees the future: not in ever-larger monolithic CUDA cores, but in heterogeneous compute blocks where different tasks are handled by purpose-built hardware. This is the same philosophy Intel bet on with their Gaudi line and Google bet on with TPUs — NVIDIA is just arriving at the party from a different direction.

The dual-chip design of the GB200 (two B200 dies connected via NVLink Chip-to-Chip) also deserves mention. Connecting dies at this bandwidth crosses a threshold where the boundary between "one chip" and "two chips" becomes architecturally meaningless. This is wafer-scale thinking without wafer-scale manufacturing risk.

My Take

I'll be direct: Blackwell is impressive, and this paper confirms it empirically rather than through vendor benchmarks. But I want to be careful about two things.

First, 1.55× training speedup on GPT-1.3B is real, but don't extrapolate blindly. The gains are heavily workload-dependent. Memory-bandwidth-bound workloads — long-context inference, KV cache-heavy serving — will see smaller gains than the headline numbers suggest. If your production workload is 70B-parameter decoding with long contexts, you need to benchmark your actual workload, not trust marketing slides or even academic papers that benchmark training.

Second, the Decompression Engine is the feature I'm most excited about, and it's the least discussed one. The industry is moving toward INT4 and sub-INT4 quantization for inference. Hardware-assisted decompression means Blackwell gets better as quantization techniques improve, without requiring a new chip. That's a smart architectural decision that will compound over time.

One criticism I'll lodge: the paper focuses heavily on training metrics. Given that inference is where most AI compute actually lives in production, I'd love to see equally rigorous inference-specific benchmarks — prefill throughput, decode latency, KV cache memory efficiency — on realistic LLM workloads. The academic community is still catching up to the reality that inference is the dominant compute regime.

NVIDIA has built something genuinely new with Blackwell. The fifth-generation Tensor Cores and TMEM represent real architectural progress, not just a die shrink. The Decompression Engine is a forward-looking bet on the quantization-dominated inference future. Whether it's worth the price premium over H100/H200 for your specific workload is a question this paper helps you answer — if you read it carefully.

References

- Jarmusch, A., et al. (2025). Microbenchmarking NVIDIA's Blackwell Architecture: An in-depth Architectural Analysis. arXiv:2512.02189.

- NVIDIA. (2024). NVIDIA Blackwell Architecture Technical Overview. NVIDIA Technical Reports.