Every time I read about neuromorphic computing, I notice a gap between the research claims and the engineering reality. Papers report impressive numbers on curated benchmarks, then note in the fine print that the hardware was running in laboratory conditions with carefully tuned inputs. Deploying real applications — not benchmarks, not toy problems, but actual use cases that matter to product teams — is where neuromorphic research has historically struggled.

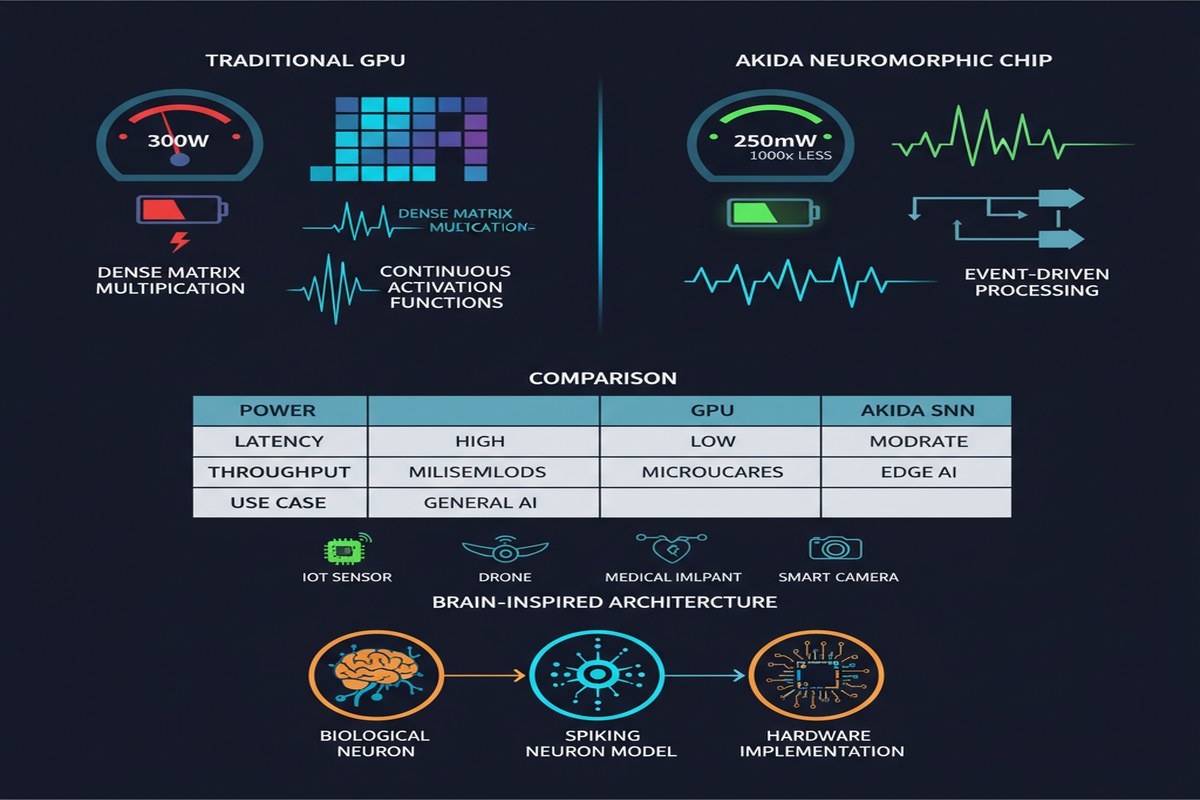

This paper from the University of Luxembourg (arXiv:2504.00957) is different. It is unabashedly applied. The researchers tackle three real-world edge AI tasks — image classification, video object detection, and voice keyword recognition — on BrainChip's Akida AKD1000 neuromorphic processor, under a hard 250 mW power constraint. They report specific latency numbers. They describe the methodology in enough detail to reproduce. This is what engineering research looks like, and the field needs more of it.

BrainChip Akida: The Other Neuromorphic Player

Most of the neuromorphic research I cover focuses on Intel Loihi 2. This is understandable — Intel has invested heavily in research partnerships and the Lava programming framework. But BrainChip's Akida is arguably the more commercially mature platform, and it deserves attention.

The AKD1000 is a commercially available chip — not a research prototype. It is in production, shipping to customers, and available in development boards that you can buy today. BrainChip's business model is licensing the Akida IP to system integrators, which means the chip appears in automotive, industrial, and IoT contexts where other neuromorphic platforms are still academic exercises.

The AKD1000 architecture is built around what BrainChip calls "Neural Processing Units" (NPUs) — silicon implementations of spiking neuron layers that support on-chip learning. The on-chip learning capability is important: unlike most edge inference accelerators, Akida can adapt to new data without sending information back to the cloud. The chip's computation model is event-driven: neurons only compute when they receive spikes, which translates directly to power savings when inputs are sparse.

flowchart TD

subgraph AKD1000["BrainChip AKD1000 Architecture"]

direction TB

subgraph NPU1["NPU Cluster 1\nConvolutional Spiking Layers"]

N1[Input Spike\nEncoding]

N2[Spiking Conv\nLayer 1]

N3[Spiking Conv\nLayer 2]

N1 --> N2 --> N3

end

subgraph NPU2["NPU Cluster 2\nFully Connected Layers"]

N4[Spiking FC\nLayer 1]

N5[Spiking FC\nLayer 2]

N4 --> N5

end

subgraph Learning["On-Chip Learning Engine"]

L1[Local STDP\nWeight Updates]

L2[No Backprop\nNeeded]

L1 --> L2

end

subgraph Memory["On-Chip SRAM\nWeight Storage"]

M1[Sparse Weight\nRepresentation]

end

NPU1 --> NPU2

NPU2 --> Learning

Memory <--> NPU1

Memory <--> NPU2

end

Input[Sensor Input\nCamera / Mic / Radar] --> AKD1000

AKD1000 --> Output[Classification\nDetection\nKeyword]

style L1 fill:#1a73e8,color:#fff

style Input fill:#34a853,color:#fff

style Output fill:#fbbc04,color:#000

The 250 mW power budget in this paper is not arbitrary. It corresponds roughly to the power envelope of an ultra-low-power embedded system — a smartphone processor in idle mode, a mid-range microcontroller at full load, or the available power budget for a USB-powered IoT device. Working within this constraint means the results are directly applicable to real product decisions.

Task 1: Image Classification

The first application is image classification on standard benchmarks (CIFAR-10 and a subset of ImageNet). The methodology involves converting a pre-trained conventional CNN to a spiking representation using BrainChip's MetaTF framework (a TensorFlow extension that handles the ANN-to-SNN conversion pipeline).

The key result: inference latency under 50 ms for image classification tasks, within the 250 mW power budget.

To put 50 ms in context: human visual recognition latency is approximately 100–200 ms for complex scene understanding, and roughly 20 ms for basic object presence detection. Under 50 ms is fast enough for real-time visual feedback in interactive applications. It is also fast enough to process camera feeds at 20+ frames per second if the classification task is simple.

The accuracy on CIFAR-10 is within 1–2% of the equivalent ANN baseline — consistent with the broader SNN literature I reviewed in the Aribe Jr. survey. The gap is not zero, but it is within acceptable range for most edge deployment scenarios where the alternative is no classification at all (because the device cannot afford the power budget for GPU inference).

Task 2: Video Object Detection

Video object detection is a harder problem than image classification. It requires maintaining temporal consistency across frames, handling objects at multiple scales, and running at sufficient frame rate to be useful. The YOLO family of detectors has dominated this space on GPUs; adapting them for neuromorphic hardware is non-trivial.

The paper describes a modified detection pipeline that exploits the temporal redundancy of video: since most pixels do not change between consecutive frames, an event-driven processor can focus computation on the changed regions only. This is analogous to how event cameras work — they fire only when pixel intensity changes, rather than capturing full frames at fixed intervals.

The result: inference latency under 200 ms for video object detection, within 250 mW.

200 ms is approximately 5 frames per second, which is on the lower end of usable for smooth video applications but acceptable for surveillance, industrial inspection, or traffic monitoring where objects move slowly relative to the frame rate. More importantly, the event-driven approach means the latency is not fixed — in scenes with little motion, latency drops substantially because there is less to process.

sequenceDiagram

participant C as Camera Feed

participant E as Event Encoder\n(Delta Frame)

participant A as Akida NPU\nObject Detector

participant T as Temporal\nConsistency Module

participant O as Detection Output

C->>E: Full frame at t=0\n(baseline)

C->>E: Frame at t+1

E->>E: Compute pixel delta\n(changed regions only)

E->>A: Sparse spike events\n(changed pixels → spikes)

Note over A: Event-driven compute\nOnly active for changed regions

A->>T: Bounding box proposals\ncurrent frame

T->>T: Merge with previous\ndetections for stability

T->>O: Stable detections\n< 200ms latency

O->>C: Ready for next frame

Task 3: Voice Keyword Recognition

Keyword recognition is arguably the killer app for neuromorphic edge computing. The requirements are almost perfectly aligned with neuromorphic capabilities: always-on, ultra-low-power, latency-sensitive, and naturally event-based (audio is a time series).

The result here is the most impressive of the three: inference latency under 1 ms for keyword recognition, within 250 mW.

Under 1 millisecond. This is not a rounding error — it is a fundamentally different response time than what GPU-based keyword recognition achieves. Standard keyword spotting on edge CPUs runs at 10–50 ms. On Akida, it runs at sub-millisecond. For a voice assistant, this means the system responds to "Hey, activate" before the human has consciously registered that they said it.

The audio processing pipeline converts incoming audio into a spike representation using a cochlear model (a simulated inner ear that generates spike trains analogous to what auditory nerve fibers produce). This spike representation is then classified by a spiking classifier on-chip. The end-to-end pipeline — microphone to keyword decision — runs entirely on-chip with no external memory access required.

The Design Methodology

Beyond the specific results, the paper's real contribution is a design methodology for deploying SNNs on the Akida platform. This is the unsexy but important part.

The methodology has four stages:

Task profiling: Determine the latency and accuracy requirements for the specific application. Understand which features of the input are most discriminative.

Network architecture selection: Choose a network architecture that maps efficiently onto Akida's NPU layout. Avoid layers that require operations Akida does not support natively (attention mechanisms, layer normalization, etc.).

ANN-to-SNN conversion: Use MetaTF to convert a pre-trained ANN to spiking representation, then fine-tune on the target dataset. The conversion process introduces accuracy loss; the fine-tuning recovers most of it.

On-chip profiling and optimization: Deploy to Akida, measure actual latency and power, iterate on the architecture until the constraints are met.

This is a disciplined engineering process, not a research workflow. The fact that it works — and produces deployable results across three different task types — validates the Akida platform as a production-ready neuromorphic option.

Why This Matters

The framing of this paper around the 250 mW power budget is what makes it matter. Most neuromorphic research implicitly assumes that power efficiency is good but not critical — that it is a nice-to-have feature that might eventually tip competitive decisions. This paper assumes the opposite: 250 mW is a hard constraint, and anything that does not fit within it is not a solution.

This framing is correct for the largest potential market. Billions of edge devices exist with hard power budgets: wearables, hearing aids, industrial sensors, automotive cockpits, smart home devices. These devices do not need ImageNet accuracy. They need reliable classification or detection of a specific, well-defined set of inputs, at ultra-low power, with acceptable latency, over battery-powered operation that lasts months or years.

The Akida results — under 50 ms for image, under 200 ms for video, under 1 ms for audio — are all within acceptable ranges for these applications. The 250 mW power budget is met. The case for deployment is not theoretical.

BrainChip occupies an interesting position in the neuromorphic landscape. Intel has better research partnerships and more powerful hardware. IBM has deeper theoretical foundations. But BrainChip has a shipping product with a real SDK and a business model that involves actual customers paying actual money. In the hardware business, that matters more than research papers.

My Take

I have two strong opinions about this paper and the Akida platform more broadly.

First: the ANN-to-SNN conversion approach is a pragmatic shortcut that the field needs to move beyond. The paper uses MetaTF to convert pre-trained ANNs to spiking representations. This works — the results prove it works — but it is fundamentally limited. You are taking a model designed for dense matrix multiplication and approximating it with spikes. The accuracy loss is manageable (1–2%), but you are leaving performance on the table. Networks designed from scratch as SNNs, with architectures that exploit temporal structure and synaptic delays (as in the Mészáros et al. paper I reviewed), will consistently outperform converted ANNs on the tasks where SNNs have a natural advantage. The field needs both approaches — conversion for rapid deployment of existing models, and native SNN design for maximum efficiency — but researchers should not mistake the conversion approach for the long-term solution.

Second: the sub-1ms keyword recognition result deserves more attention than it gets. In a field that tends to lead with energy efficiency numbers, the latency story for audio is underappreciated. Sub-millisecond keyword recognition means that neuromorphic audio processing is not just more efficient than GPU-based processing — it is faster. This opens application spaces that GPU systems cannot enter regardless of efficiency: real-time hearing augmentation, latency-critical industrial control, and any application where the response must arrive before the human perceives the stimulus. These are not niche markets. They are genuinely large.

My overall view on Akida vs. Loihi 2: these platforms are not competing for the same customers. Loihi 2 is a research platform with extraordinary capabilities (on-chip learning, complex neuron models, large network sizes) that requires Intel partnership to access. Akida is a commercial product with a documented SDK, available development kits, and a business model. If you are a research group exploring the frontier of neuromorphic capabilities, you want Loihi 2. If you are a product team that needs to ship something in the next 18 months that runs within a power budget, you want Akida.

The University of Luxembourg paper demonstrates that Akida can ship real products. That is not a trivial result. In a field full of impressive demonstrations that never leave the lab, a paper that shows end-to-end deployment across three real application types is worth taking seriously.

250 mW is all you get. The paper shows it is enough.

Paper: University of Luxembourg, "Enabling Efficient Processing of Spiking Neural Networks with On-Chip Learning on Commodity Neuromorphic Processors for Edge AI Systems," arXiv:2504.00957, April 2025.