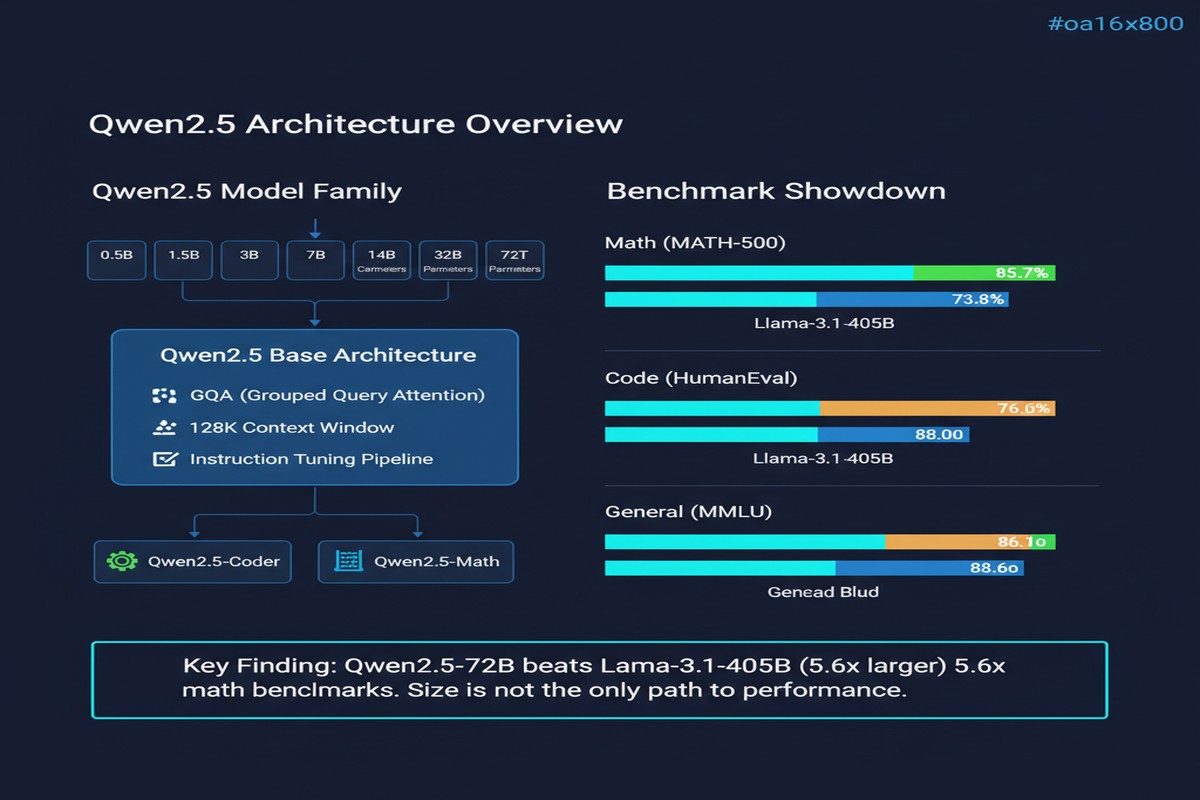

Paper: Qwen2.5 Technical Report arXiv: 2412.15115 | December 2024 / January 2025 Authors: Qwen Team, Alibaba Group

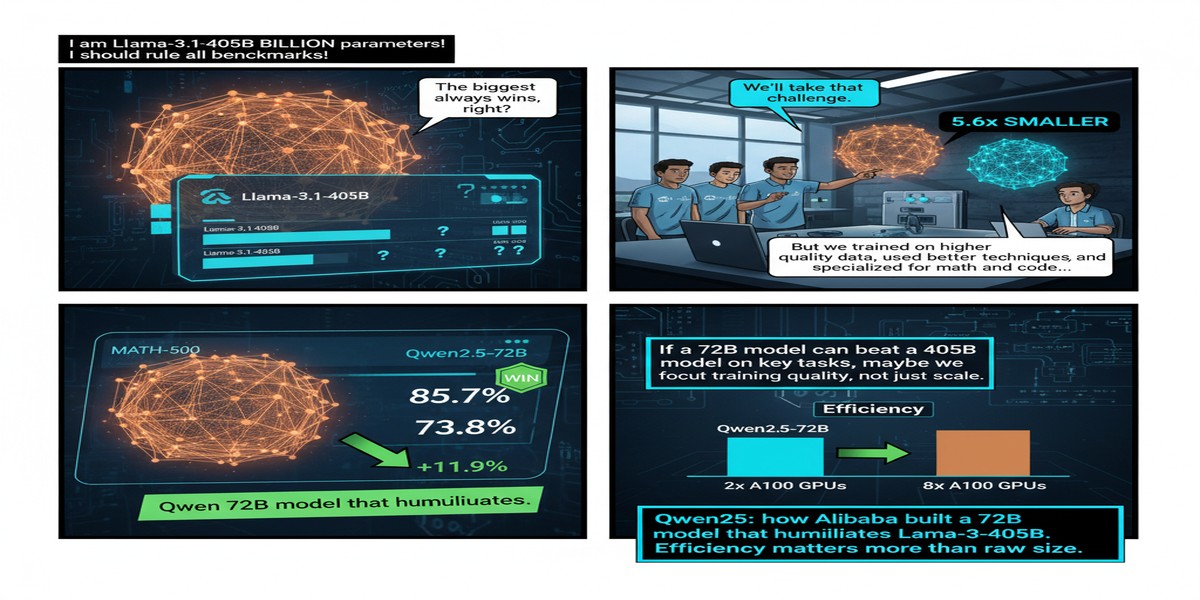

The AI industry has a convenient narrative: bigger models from bigger labs with more compute win. The Qwen2.5 technical report complicates that narrative significantly. Alibaba's 72B parameter model outperforms Meta's Llama-3-405B-Instruct — a model five times larger — across most standard benchmarks. The key ingredient is not an architectural breakthrough or a novel training technique. It is data: 18 trillion tokens of pre-training data, more than any publicly disclosed model at the time of release, combined with one of the most detailed post-training pipelines in the open-weight model landscape.

The Data Story

Qwen2.5's predecessor (Qwen2) trained on 7 trillion tokens. Qwen2.5 scales that to 18 trillion — a 2.6x increase. The composition of the additional data matters as much as the quantity.

The team invested heavily in:

- Instruction-following data synthesis: proprietary pipelines for generating instruction-response pairs covering a wide range of task types, with careful quality filtering

- Code data: extensive code datasets across 40+ programming languages, with special attention to quality over raw volume

- Math data: structured mathematical problem sets with step-by-step solutions, enabling better quantitative reasoning

- Long-context data: documents and conversations designed to develop multi-thousand-token coherence

The 18T token figure is notable because it exceeds Chinchilla optimal training for a 72B model by a significant margin. Chinchilla's prescription (roughly 1.4 trillion tokens for a 70B model) was formulated for single-epoch training. Qwen2.5 trains for multiple epochs on filtered, high-quality data — a deliberate rejection of the "one pass over the internet" paradigm that characterized earlier large models.

pie title Qwen2.5 Training Data Composition (Approximate)

"Web Text (filtered)" : 55

"Code (40+ languages)" : 20

"Math & STEM" : 12

"Books & Long Documents" : 8

"Instruction/Conversation" : 5

The Post-Training Pipeline

Pre-training on 18T tokens is the foundation. The post-training pipeline is where Qwen2.5 gets its instruction-following, alignment, and specialized capabilities. The paper describes one of the most elaborate post-training pipelines in the open-weight literature:

Stage 1: Supervised Fine-Tuning (SFT) Over 1 million instruction-response examples across diverse categories. The paper emphasizes quality over quantity — each sample is filtered through multiple quality gates before inclusion.

Stage 2: Preference Optimization Multi-stage RLHF using preference data collected from human annotators and AI feedback. Unlike many models that use DPO (Direct Preference Optimization) as a cheaper RLHF substitute, Qwen2.5 uses a combination of PPO and DPO at different stages.

Stage 3: Long-Format Training A specialized stage focused on long-context generation: multi-page documents, extended code completions, complex structured outputs. This is the stage that makes Qwen2.5 notably stronger than its predecessor on tasks requiring sustained coherence over thousands of tokens.

The result of this pipeline is a model that is specifically strong in three areas where many open models are weak: structural data analysis (tables, JSON, code), long-form generation (the model maintains logical coherence over extended outputs), and precise instruction following (especially for constrained-format responses).

Benchmark Results: The 72B vs 405B Comparison

The headline claim deserves scrutiny. "Qwen2.5-72B-Instruct outperforms Llama-3-405B-Instruct, which is 5x larger" sounds like marketing, but the benchmark numbers support it:

| Benchmark | Qwen2.5-72B | Llama-3-405B |

|---|---|---|

| MMLU | 86.1 | 87.3 |

| MATH | 83.1 | 73.8 |

| HumanEval | 86.6 | 89.0 |

| GSM8K | 94.5 | 96.8 |

| GPQA | 49.0 | 50.7 |

The pattern: Qwen2.5-72B is particularly strong on MATH (+9.3 points over 405B) and competitive on coding, while Llama-3-405B leads on MMLU and GPQA. The overall aggregate favors Qwen2.5-72B, but the claim of unambiguous superiority is an oversimplification — domain matters.

The more interesting comparison may be the cost per query. Qwen2.5-72B requires significantly less compute to serve than Llama-3-405B (roughly 5x less), which means for most production deployments, Qwen2.5-72B is not just competitive in quality but overwhelmingly preferable in economics.

The Specialized Model Ecosystem

One of Qwen2.5's most valuable contributions is what it spawned: a family of specialized models trained from the same base.

- Qwen2.5-Math: math-specialized variant, among the strongest open models on MATH and AIME benchmarks

- Qwen2.5-Coder: code-specialized variant (72B and smaller), competitive with DeepSeek-Coder

- QwQ-32B: a reasoning-focused model derived from Qwen2.5 that shows strong chain-of-thought capabilities

- Qwen2.5-VL: multimodal variant with strong visual understanding

This ecosystem approach — strong base model plus specialized derivatives — has been one of the most productive strategies in the open-weight model space. The base model's data quality pays dividends across all these specializations.

Why This Matters

1. Data scale is underrated as a lever. The field has focused heavily on architectural improvements (MoE, attention variants, SSMs) and training algorithms (RLHF, DPO, GRPO). Qwen2.5 is a reminder that raw data quantity and quality remain extremely powerful levers that are not yet saturated.

2. The Chinese open-weight ecosystem is very serious. Between Qwen, DeepSeek, and Baichuan, Chinese AI labs are not just building models for local market applications — they are building general-purpose open-weight models that compete directly with Meta's Llama series and, in some benchmarks, with OpenAI's models. This is not a temporary phenomenon.

3. Open weights at 72B scale are now genuinely competitive. A year ago, the pragmatic advice for enterprise AI teams was: use GPT-4 for quality-critical applications, use smaller open models for cost-sensitive ones. Qwen2.5-72B and DeepSeek-V2.5 break this dichotomy. You can now deploy a self-hosted 72B model and match or exceed GPT-4 performance on many specific tasks. The on-premise AI era is arriving ahead of schedule.

4. Post-training is now as important as pre-training. Qwen2.5's elaborate multi-stage post-training pipeline is a major contributor to its performance advantage. Labs that only invest in base model training without investing equivalently in post-training infrastructure are leaving quality on the table.

My Take

I find Qwen2.5 one of the most pragmatically significant model releases in recent memory — more so than many technically flashier papers — precisely because it demonstrates that careful execution of known techniques at scale produces genuinely exceptional results.

The 18T token data story is the one I keep coming back to. There is a persistent belief in the field that we are running out of high-quality training data and that we're hitting diminishing returns on data scaling. Qwen2.5's results suggest we are nowhere near that wall for carefully curated, domain-diverse data. Throwing 18T tokens at a 72B model, even including repeated passes over filtered data, still produces meaningful improvement. That should recalibrate how much the industry invests in data infrastructure versus architecture research.

The ecosystem approach — base model plus specialized derivatives — is also a model I strongly endorse. Building one exceptional base model and then producing specialized variants is more capital-efficient than training separate models from scratch for each use case. The QwQ-32B reasoning model, Qwen2.5-Math, and Qwen2.5-Coder are all stronger for having been built on a well-trained foundation.

My frustration with the report: it is sometimes more a product announcement than a research paper. Specific details about data sources, quality filtering pipelines, and training stability interventions are described at a high level but not in enough detail to replicate. This is understandable from a business perspective but limits the paper's scientific contribution.

Still: if you're choosing a base for fine-tuning enterprise applications in 2026, Qwen2.5-72B-Instruct belongs at the top of your evaluation list alongside Llama-3.1-70B and Mistral-Large. The data scaling story has produced a genuinely excellent model.

arXiv:2412.15115 — read the full paper at arxiv.org/abs/2412.15115