Paper: LLMOrbit: A Circular Taxonomy of Large Language Models — From Scaling Walls to Agentic AI Systems arXiv: 2601.14053 | January 2026

The LLM field generates dozens of significant papers every week. For practitioners building systems, this pace has become genuinely difficult to navigate. What architectural choices have proven durable? Which training techniques have been validated at scale? What's the state of the art on efficiency versus quality tradeoffs? Which agentic patterns are robust and which are research demonstrations?

LLMOrbit attempts to answer these questions with a systematic, circular taxonomy that organizes the LLM landscape across architecture, training methodology, inference optimization, and agentic applications. Published in January 2026, it covers the current state of a field that has changed more in the past 24 months than in the previous 7 years of transformer history.

This is a survey paper, not a research contribution in the traditional sense. But a good survey at the right moment is enormously valuable, and LLMOrbit is timely and comprehensive.

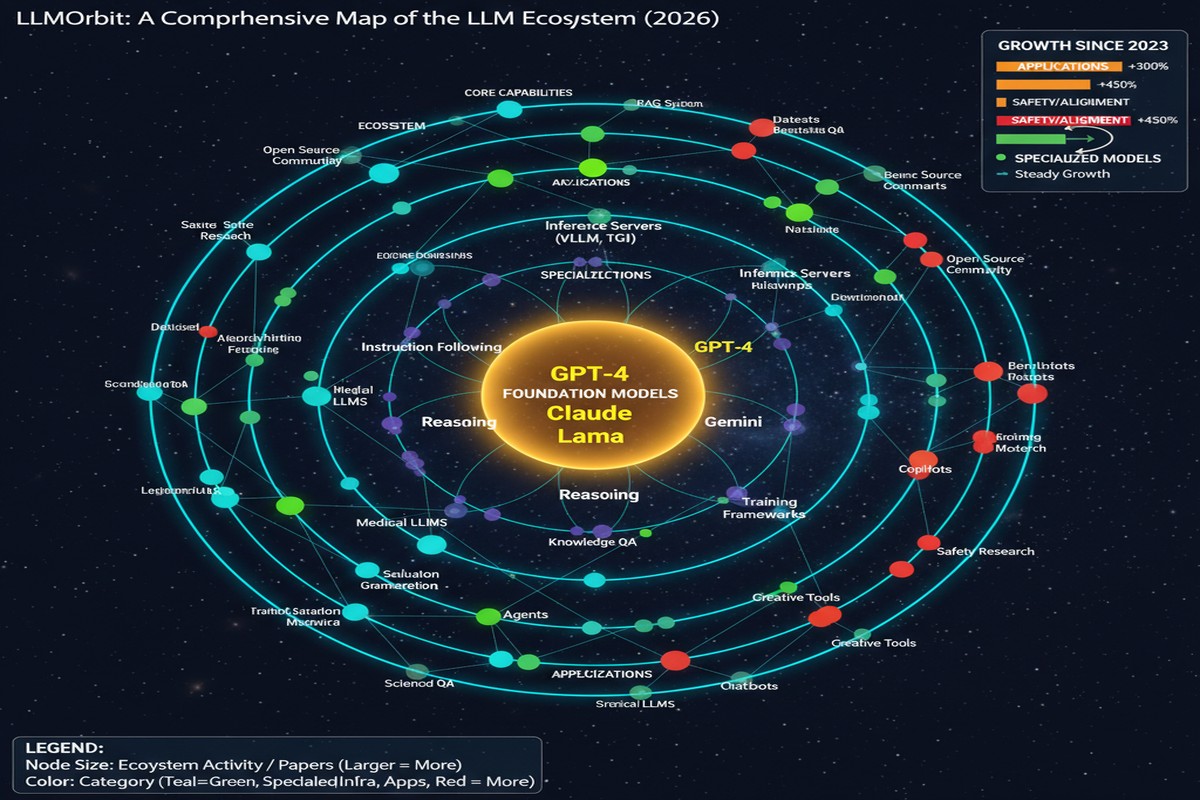

The Circular Taxonomy Structure

The "circular" framing is deliberate: the authors argue that LLM capability advances feed back into enabling the next wave of advances in a cycle. Better architectures enable more efficient training; more efficient training enables larger scale; larger scale enables capabilities that drive demand for better architectures.

```mermaid
flowchart TD
    A[Scaling Foundations] --> B[Architecture Evolution]
    B --> C[Training Methodology]
    C --> D[Inference Optimization]
    D --> E[Agentic Applications]
    E --> A
    A --> A1[Scaling laws, data, compute]
    B --> B1[Attention variants, MoE, SSM hybrids]
    C --> C1[RLHF, DPO, GRPO, RLVR]
    D --> D1[Quantization, KV cache, speculative decoding]
    E --> E1[Tool use, multi-agent, RAG, planning]
```

The circular structure captures the feedback loops: agentic applications generate new training data (from agent trajectories), which feeds back into training methodology development. Inference optimization enables more capable models to be deployed economically, which drives demand for more capable models, which requires architectural evolution.

Key Findings: What Has Actually Been Validated

The paper's value is in its synthesis. Rather than just listing every paper, it identifies which claims have been validated across multiple independent groups and which remain contested.

Architecturally validated:

- FlashAttention and its successors (FlashAttention-2 and FlashAttention-3) are now table stakes. Any production transformer without an optimized attention kernel is uncompetitive

- Grouped Query Attention (GQA) consistently improves inference efficiency with minimal quality loss — now standard in virtually all new large model releases

- MoE architecture at scale produces quality-per-active-parameter advantages that are robust across multiple implementations (Mistral, Llama 4, Qwen3)

- SSM-Transformer hybrids work in practice, not just theory — Jamba and Hunyuan provide production validation
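
To make the GQA point concrete, here is a rough back-of-the-envelope sketch of why sharing key/value heads helps at inference time. The model shape (32 layers, 32 query heads, head dimension 128, 8 KV heads, fp16 cache) is a hypothetical configuration chosen for round numbers, not one taken from the paper.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Bytes needed to cache keys and values for every layer and position."""
    # The leading 2 accounts for two cached tensors per layer: keys and values.
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_value


# Hypothetical model: 32 layers, 32 query heads, head_dim 128, fp16 cache.
# Standard multi-head attention keeps one KV head per query head.
mha = kv_cache_bytes(n_layers=32, n_kv_heads=32, head_dim=128, seq_len=8192)
# GQA with 8 KV heads: every 4 query heads share one KV head.
gqa = kv_cache_bytes(n_layers=32, n_kv_heads=8, head_dim=128, seq_len=8192)

print(f"MHA cache: {mha / 2**30:.1f} GiB per sequence")
print(f"GQA cache: {gqa / 2**30:.1f} GiB per sequence")
print(f"Reduction: {mha // gqa}x")
```

The cache shrinks in proportion to the ratio of query heads to KV heads, which is why the savings grow more important as context length and batch size increase.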

Training methodology validated:

- RLHF remains foundational; DPO is a viable, cheaper alternative for preference fine-tuning but not a full replacement

- GRPO (introduced in DeepSeekMath and popularized by DeepSeek-R1) has been replicated by multiple groups and validated as effective for verifiable reasoning tasks

- Multi-stage post-training (SFT → preference → long-format → domain-specific) consistently outperforms single-stage approaches
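
For readers who have not looked at GRPO closely, the core idea is that each sampled answer is scored relative to the other answers sampled for the same prompt, so no separate value model is needed. The sketch below shows only that group-relative advantage step, under my own assumptions: the function name is hypothetical, and the use of the population standard deviation and the `eps` value are illustrative choices. It leaves out the clipped policy objective and the KL term entirely.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-8):
    """Normalize each reward against the other samples for the same prompt."""
    mu = mean(rewards)
    sigma = pstdev(rewards)  # assumption: population std; eps avoids division by zero
    return [(r - mu) / (sigma + eps) for r in rewards]


# Four sampled answers to one prompt, scored by a verifier (1 = correct, 0 = wrong).
rewards = [1.0, 0.0, 0.0, 1.0]
for reward, advantage in zip(rewards, group_relative_advantages(rewards)):
    print(f"reward={reward:.1f}  advantage={advantage:+.3f}")
```

Note that when every sample in a group gets the same reward, all advantages are zero and the group contributes no learning signal. That is one way to see why the method depends on tasks with a verifiable, discriminating reward.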

Inference optimization validated:

- 8-bit quantization (INT8) is essentially lossless for most applications; 4-bit (INT4) is acceptable for inference with minor quality loss

- Speculative decoding reliably improves throughput for autoregressive generation with compatible model pairs

- KV cache management (eviction, compression, quantization) is production-critical at long context lengths
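
The quantization claim is easier to reason about with a toy round trip. This is a minimal sketch of symmetric per-tensor absmax quantization on a handful of made-up weights; real systems typically use per-channel or per-group scales and calibration data, so treat the numbers as an illustration of how rounding error grows as bits shrink, not as a measurement of model quality.

```python
def quantize(values, bits):
    """Symmetric absmax quantization to signed integers of the given width."""
    qmax = 2 ** (bits - 1) - 1                  # 127 for 8-bit, 7 for 4-bit
    absmax = max(abs(v) for v in values)
    scale = absmax / qmax if absmax > 0 else 1.0
    codes = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate real values."""
    return [c * scale for c in codes]


def max_abs_error(values, bits):
    """Worst-case round-trip error for one tensor at the given bit width."""
    codes, scale = quantize(values, bits)
    restored = dequantize(codes, scale)
    return max(abs(v - r) for v, r in zip(values, restored))


# Made-up weights, purely for illustration.
weights = [0.12, -0.5, 0.33, 0.9, -0.77]
for bits in (8, 4):
    print(f"{bits}-bit max round-trip error: {max_abs_error(weights, bits):.4f}")
```

The round-trip error is bounded by half the scale, and the scale is roughly 18 times larger at 4 bits than at 8 bits (127 levels versus 7 on each side of zero).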

Agentic patterns — more contested:

- The ReAct (Reason + Act) loop is the dominant agentic pattern but shows reliability degradation on long-horizon tasks

- RAG is validated for knowledge retrieval; performance on multi-hop reasoning over retrieved content varies significantly

- Multi-agent coordination patterns remain largely research demonstrations without robust production deployments at scale
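
For anyone unfamiliar with the ReAct pattern, the control flow is simple, and most of the engineering effort goes into the guard rails around it. Below is a minimal sketch with a hard step budget and tool errors fed back as observations. Everything here is hypothetical scaffolding: `react_loop`, `scripted_policy`, and `add_tool` are illustrative names, and the scripted policy stands in for a real model call.

```python
from typing import Callable, Dict, List, Tuple

# An action is ("tool_name", "argument") or ("finish", "final answer").
Action = Tuple[str, str]


def react_loop(policy: Callable[[List[str]], Action],
               tools: Dict[str, Callable[[str], str]],
               task: str,
               max_steps: int = 5) -> Tuple[str, List[str]]:
    """Alternate between choosing an action and observing its result."""
    transcript = [f"Task: {task}"]
    for _ in range(max_steps):
        name, arg = policy(transcript)
        if name == "finish":
            return arg, transcript
        tool = tools.get(name)
        if tool is None:
            observation = f"error: unknown tool {name!r}"
        else:
            try:
                observation = tool(arg)
            except Exception as exc:  # surface tool failures to the policy
                observation = f"error: {exc}"
        transcript.append(f"Action: {name}({arg})")
        transcript.append(f"Observation: {observation}")
    # The budget prevents an unreliable policy from looping forever.
    return "gave up: step budget exhausted", transcript


def scripted_policy(transcript: List[str]) -> Action:
    """Stand-in for a model: call the tool once, then report its result."""
    if not any(line.startswith("Observation:") for line in transcript):
        return ("add", "2,3")
    return ("finish", transcript[-1].split(": ", 1)[1])


def add_tool(arg: str) -> str:
    a, b = arg.split(",")
    return str(int(a) + int(b))


answer, log = react_loop(scripted_policy, {"add": add_tool}, "What is 2 + 3?")
print(answer)
```

The step budget and the error-as-observation path are the parts that matter for the long-horizon reliability problem: they bound the damage when the policy goes wrong, but they do not make the policy itself any more reliable.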

The Scaling Wall Analysis

The paper's most interesting contribution may be its treatment of the "scaling wall" problem. The original scaling laws (Kaplan et al.; Hoffmann et al., the Chinchilla paper) predicted continuous improvement with scale. The field has hit friction points that the original laws didn't fully account for:

Data exhaustion: Training at Chinchilla-optimal token counts on internet-scale text corpora is exhausting the supply of novel, high-quality data. Synthetic data (from models like Gemini and GPT-4) partially addresses this but raises questions about how well its distribution matches real text.
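
To get a feel for the token budgets involved, here is a quick arithmetic sketch. It uses two widely quoted approximations that are my additions rather than figures from the paper: the Chinchilla result is often summarized as roughly 20 training tokens per parameter, and training compute is commonly estimated as about 6 FLOPs per parameter per token. Both are rules of thumb, not exact constants.

```python
TOKENS_PER_PARAM = 20  # rough Chinchilla rule of thumb (assumption, not exact)


def chinchilla_optimal_tokens(n_params):
    """Approximate compute-optimal training tokens for a dense model."""
    return TOKENS_PER_PARAM * n_params


def training_flops(n_params, n_tokens):
    """Common estimate of training compute: about 6 FLOPs per parameter per token."""
    return 6 * n_params * n_tokens


for n_params in (7e9, 70e9, 405e9):
    tokens = chinchilla_optimal_tokens(n_params)
    flops = training_flops(n_params, tokens)
    print(f"{n_params / 1e9:>5.0f}B params -> {tokens / 1e12:.2f}T tokens, {flops:.2e} FLOPs")
```

Under these approximations the token requirement grows linearly with parameter count while compute grows quadratically, which is why the supply of high-quality text becomes a binding constraint as models get larger.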

Diminishing returns on pure parameter scaling: Models beyond ~405B parameters show diminishing quality improvements per additional parameter. The field has responded with MoE (more parameters, same inference cost) and data quality improvements rather than raw parameter increases.
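
The "more parameters, same inference cost" trade can be seen with simple accounting. In this sketch the parameter counts are hypothetical round numbers, not those of any released model, and the split into "shared" (attention, embeddings) and "per-expert" (feed-forward) parameters is a simplification.

```python
def moe_param_counts(shared_params, params_per_expert, n_experts, top_k):
    """Return (total, active-per-token) parameter counts for a simple MoE model."""
    total = shared_params + n_experts * params_per_expert
    # Each token is routed to only top_k experts, so only those weights are used.
    active = shared_params + top_k * params_per_expert
    return total, active


# Hypothetical configuration: 2B shared parameters, 8 experts of 6B each, top-2 routing.
total, active = moe_param_counts(
    shared_params=2_000_000_000,
    params_per_expert=6_000_000_000,
    n_experts=8,
    top_k=2,
)
print(f"total:  {total / 1e9:.0f}B parameters")
print(f"active: {active / 1e9:.0f}B parameters per token")
```

Per-token compute tracks the active count, but all experts still have to be held in memory, so the saving is in FLOPs rather than in serving footprint.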

The reasoning capability ceiling: For domains without verifiable ground truth, RL-based training hits walls because there's no signal to optimize toward. The paper identifies this as the most significant near-term constraint on reasoning model progress.

Energy and cost limits: Training frontier models costs tens to hundreds of millions of dollars. The concentration of frontier capability in a small number of organizations is partly an economic phenomenon, not just a technical one.

The Agentic Gap

The taxonomy identifies a clear gap between the state of foundational LLM capabilities and what is needed for robust agentic systems. LLMs are now excellent at:

- Generating plausible, coherent text

- Solving closed-form problems (math, code) given extended reasoning

- Following complex instructions for single-turn tasks

- Retrieving and synthesizing information from given context

LLMs remain weak at:

- Long-horizon planning with error recovery

- Reliable tool use in adversarial environments

- Maintaining coherent goals and state across very long agent episodes

- Self-assessment of when their outputs are likely wrong

This gap is the central engineering challenge for building reliable AI agents in 2026. The paper describes it honestly without overstating progress.
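
One way to see why long-horizon episodes are so much harder than single-turn tasks is to compound per-step reliability. The sketch below assumes every step succeeds independently with the same probability and that there is no error recovery, which is a deliberate oversimplification of my own and not a model from the paper.

```python
import math


def episode_success_probability(per_step_success, n_steps):
    """Chance that all steps succeed, assuming independent, equally reliable steps."""
    return per_step_success ** n_steps


def max_steps_for_target(per_step_success, target):
    """Longest episode that still meets the target end-to-end success rate."""
    return math.floor(math.log(target) / math.log(per_step_success))


for steps in (1, 10, 50, 100):
    p = episode_success_probability(0.99, steps)
    print(f"{steps:>3} steps at 99% per step -> {p:.1%} end-to-end")

print(max_steps_for_target(0.99, 0.90), "steps keep end-to-end success at or above 90%")
```

Even a step that works 99 times out of 100 leaves a 100-step episode succeeding only about a third of the time, which is why error recovery and self-assessment matter more than raw per-step accuracy.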

Why This Matters

1. Practitioners need maps, not just territory reports. The LLM literature is vast and poorly organized. LLMOrbit provides the systematic organization that makes individual papers more comprehensible in context. If you're explaining the LLM landscape to a team lead or a board, this paper gives you the vocabulary.

2. The agentic gap analysis is practically useful. Knowing specifically what LLMs can and cannot reliably do in agentic settings helps you design systems that compensate for known weaknesses rather than discovering them in production.

3. The "validated vs. contested" framing is intellectually honest. Too many survey papers treat every claim as equally well-established. LLMOrbit distinguishes between "replicated by multiple groups" and "single-paper result" — a distinction that matters enormously for engineering decisions.

4. The scaling wall analysis clarifies where the industry goes next. If raw parameter scaling has diminishing returns and data is exhausted, the next improvements must come from architectural efficiency, training methodology, and — most speculatively — fundamentally new approaches to reasoning and knowledge representation.

My Take

Survey papers are undervalued. The research community rewards novelty; surveys don't produce novelty, they produce clarity. But for practitioners, clarity about what has been established is often more valuable than a new experimental result.

LLMOrbit is genuinely useful for three audiences. For engineers: it tells you what architectural and inference choices are validated and which are still experimental. For researchers: it maps the open problems with intellectual honesty about what's unsolved. For executives and product leaders: it provides a structured vocabulary for understanding AI capability and limitation that doesn't require reading 200 individual papers.

My primary criticism: the agentic applications section is weaker than the architecture and training sections. This reflects the field — we have much more empirical clarity about what works in training than in deployment — but I'd have appreciated more specific characterization of which agentic failure modes are tractable versus fundamental.

What I find most valuable is the paper's intellectual courage in describing the scaling wall honestly. The narrative from AI labs and investors has been uniformly optimistic about continued exponential capability growth. LLMOrbit acknowledges the friction points without being pessimistic — it frames them as engineering challenges to be solved, not fundamental limits. That balance is exactly right.

The field needs more papers that take stock of what has been established and where the genuine uncertainty lies. LLMOrbit contributes to that project at exactly the right moment — early 2026, after two years of extraordinary development, with a community that needs to consolidate its understanding before deciding where to focus next.

arXiv:2601.14053 — read the full paper at arxiv.org/abs/2601.14053