Paper: Large Language Model Reasoning Failures
arXiv: 2602.06176 | February 2026
Authors: Peiyang Song et al. (3 authors)

The LLM benchmarking ecosystem is obsessed with what models can do at their best. MATH-500 scores, GPQA Diamond performance, HumanEval pass rates — all of these measure peak capability on carefully curated problems designed to be challenging but solvable. They tell you what the model can do when everything goes right.

What they don't tell you is what happens when things go wrong — and more importantly, how they go wrong. That's the question this paper addresses head-on.

Published in February 2026, this survey by Song et al. is, as the authors note, the first comprehensive survey dedicated specifically to reasoning failures in LLMs. Not limitations, not capability gaps — active failure modes, the ways in which LLM reasoning produces outputs that are confidently wrong, structurally incoherent, or misleading in systematic ways.

For anyone deploying LLMs in reasoning-critical applications, this paper should be required reading.

The Core Framework: Embodied vs. Non-Embodied Reasoning

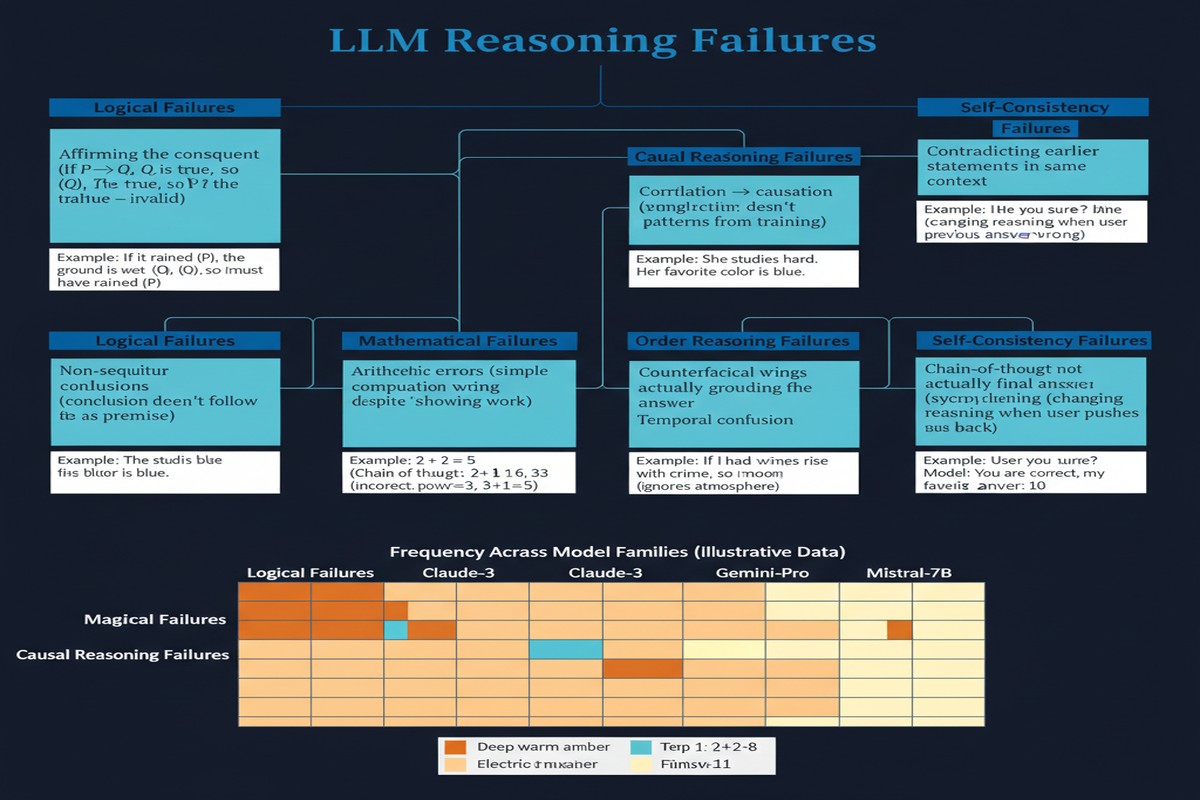

The paper's primary taxonomic contribution is a distinction that I find genuinely illuminating: the separation of reasoning failures into embodied and non-embodied categories.

Non-embodied reasoning failures are failures in reasoning about abstract or symbolic domains — mathematics, logic, formal proofs, code correctness. These are the kinds of failures that show up on traditional reasoning benchmarks. The model gets the wrong answer to a math problem, makes an invalid logical inference, or produces code with a subtle bug.

Embodied reasoning failures are failures in reasoning about the physical world — spatial relationships, causal physical processes, temporal sequences in real environments, common-sense physics. These don't show up on standard benchmarks because those benchmarks don't probe physical world reasoning directly.

The distinction matters because the failure modes, and the mitigations, are different in the two categories.

```mermaid
graph TD
    RF[LLM Reasoning Failures]
    RF --> NE[Non-Embodied Reasoning]
    RF --> EM[Embodied Reasoning]
    NE --> M["Mathematical Failures<br/>Arithmetic errors<br/>Algebra mistakes<br/>Proof invalidation"]
    NE --> L["Logical Failures<br/>Invalid inference<br/>Scope confusion<br/>Negation errors"]
    NE --> C["Causal Failures<br/>Correlation→causation<br/>Reverse causality<br/>Confounding"]
    NE --> CG["Code/Formal Failures<br/>Spec misunderstanding<br/>Edge case blindness<br/>Unsound proofs"]
    EM --> S["Spatial Failures<br/>Layout errors<br/>Navigation mistakes<br/>Geometric confusion"]
    EM --> P["Physical Failures<br/>Physics intuition errors<br/>Material property mistakes"]
    EM --> T["Temporal Failures<br/>Sequence errors<br/>Duration estimation<br/>Event ordering"]
    style M fill:#ffcccc
    style L fill:#ffcccc
    style C fill:#ffcccc
    style CG fill:#ffcccc
    style S fill:#cce5ff
    style P fill:#cce5ff
    style T fill:#cce5ff
```

The Seven Failure Classes

Within this framework, the paper identifies seven specific failure classes:

Class 1: Mathematical Reasoning Failures

These are the best-studied failures: arithmetic errors, algebraic manipulation mistakes, counting errors, and failure to recognize when a mathematical approach is inapplicable. The paper notes a distinctive pattern: LLMs are often better at multi-step calculations than at single-step arithmetic when that single step involves a less common operation. This suggests the failure is not computational in the traditional sense but a matter of pattern matching: the model executes a learned procedure that breaks down on out-of-distribution inputs.
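One cheap guard against this class is to redo the arithmetic outside the model. Below is a minimal sketch, assuming the model writes its intermediate results as plain `a <op> b = c` equalities without parentheses; `check_arithmetic`, `safe_eval`, and the `CLAIM` pattern are hypothetical helpers of mine, not anything the paper defines.

```python
import ast
import operator
import re

# Only these four binary operators are evaluated; anything else is rejected.
_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}

# Hypothetical pattern for claims like "17 * 23 = 391": an expression that starts
# with an optional minus and a digit, then "=", then a (possibly decimal) number.
CLAIM = re.compile(r"(-?\d[\d.\s+\-*/]*?)\s*=\s*(-?\d+(?:\.\d+)?)")


def safe_eval(expr):
    """Evaluate a numeric expression using only + - * / and unary minus (no eval())."""
    def ev(node):
        if isinstance(node, ast.Expression):
            return ev(node.body)
        if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _OPS:
            return _OPS[type(node.op)](ev(node.left), ev(node.right))
        if isinstance(node, ast.UnaryOp) and isinstance(node.op, ast.USub):
            return -ev(node.operand)
        raise ValueError(f"unsupported expression: {expr!r}")
    return ev(ast.parse(expr, mode="eval"))


def check_arithmetic(text, tol=1e-9):
    """Return (expression, claimed, actual) for every equality in `text` that does not hold."""
    errors = []
    for expr, claimed in CLAIM.findall(text):
        expr = expr.strip()
        try:
            actual = safe_eval(expr)
        except (ValueError, SyntaxError, ZeroDivisionError):
            continue  # not a simple arithmetic claim; leave it to other checks
        if abs(actual - float(claimed)) > tol:
            errors.append((expr, float(claimed), actual))
    return errors


print(check_arithmetic("First, 17 * 23 = 391. Then 391 + 9 = 401."))
# [('391 + 9', 401.0, 400)]
```

The first claim checks out and only the slip in the second is reported. Anything the pattern can't parse is skipped rather than flagged, so this is a filter for obvious errors, not a proof checker.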

Class 2: Logical Inference Failures

Failures in formal logical reasoning: invalid deductive inferences, mistakes with quantifiers (for example, treating "all X are Y" as if it implied "all Y are X"), and errors with negation and conditional logic. A particularly interesting finding: models often perform better on a logic problem when it is presented as natural-language reasoning than when it is written in symbolic notation, suggesting that the relevant skill is linguistic pattern matching rather than formal symbolic manipulation.
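Invalid inferences like the conversion error above can be caught mechanically by searching for a countermodel: an interpretation where every premise holds and the conclusion fails. Here's a minimal sketch over small finite universes, where each predicate is a set and "all A are B" means A is a subset of B. `find_countermodel` is a hypothetical helper, and because the search only covers universes of the given size, finding no countermodel is evidence of validity rather than proof.

```python
from itertools import product


def subsets(universe):
    """Every subset of a small universe, as frozensets (2**n of them)."""
    items = list(universe)
    return [frozenset(x for x, keep in zip(items, mask) if keep)
            for mask in product([False, True], repeat=len(items))]


def all_are(a, b):
    """'All A are B' holds exactly when A is a subset of B."""
    return a <= b


def find_countermodel(predicates, premises, conclusion, size=3):
    """Search every interpretation of `predicates` over {0, ..., size-1}.

    Returns an interpretation where all premises hold and the conclusion fails,
    or None if no such interpretation exists at this universe size.
    """
    candidates = subsets(range(size))
    for interpretation in product(candidates, repeat=len(predicates)):
        env = dict(zip(predicates, interpretation))
        if all(p(env) for p in premises) and not conclusion(env):
            return env
    return None


# Invalid conversion: "all X are Y", therefore "all Y are X".
conversion = find_countermodel(
    ["X", "Y"],
    premises=[lambda e: all_are(e["X"], e["Y"])],
    conclusion=lambda e: all_are(e["Y"], e["X"]),
)

# Valid syllogism: "all A are B", "all B are C", therefore "all A are C".
barbara = find_countermodel(
    ["A", "B", "C"],
    premises=[lambda e: all_are(e["A"], e["B"]), lambda e: all_are(e["B"], e["C"])],
    conclusion=lambda e: all_are(e["A"], e["C"]),
)

print(conversion)  # {'X': frozenset(), 'Y': frozenset({2})}
print(barbara)     # None
```

The countermodel found for the conversion (no X at all, one Y) is vacuous but legitimate; any world where Y is strictly larger than X breaks the inference.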

Class 3: Causal Reasoning Failures

LLMs systematically conflate correlation with causation, reverse causal directions, and fail to reason correctly about confounding variables and mediating factors. This is particularly dangerous in applied contexts — a model that reasons about medical interventions, economic policies, or organizational decisions using spurious causal models can produce plausible-sounding recommendations that are systematically wrong.
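To see why correlation-as-causation reasoning misleads, here's a toy structural causal model of my own (the coefficients are arbitrary assumptions, not from the paper): a hidden variable Z drives both X and Y, and X has no effect on Y at all. Observationally, X looks like a strong predictor of Y, but intervening on X (simulated by setting X directly, as in a do-operation) changes nothing.

```python
import random
from statistics import fmean


def simulate(n, seed, do_x=None):
    """Toy structural causal model (coefficients are arbitrary assumptions).

    Z -> X and Z -> Y; there is no arrow from X to Y.
    If do_x is given, X is set by intervention and no longer depends on Z.
    """
    rng = random.Random(seed)
    xs, ys = [], []
    for _ in range(n):
        z = rng.gauss(0.0, 1.0)                                    # hidden common cause
        x = do_x if do_x is not None else z + rng.gauss(0.0, 0.5)
        y = 2.0 * z + rng.gauss(0.0, 0.5)                          # Y depends on Z only
        xs.append(x)
        ys.append(y)
    return xs, ys


def slope(xs, ys):
    """Ordinary least-squares slope of ys on xs."""
    mx, my = fmean(xs), fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs)
    return cov / var


# Observational data: X strongly "predicts" Y (theoretical slope 2 / 1.25 = 1.6).
xs, ys = simulate(50_000, seed=1)
observational = slope(xs, ys)

# Interventional data: forcing X to +1 vs -1 leaves Y unchanged (true effect 0).
_, y_hi = simulate(50_000, seed=2, do_x=1.0)
_, y_lo = simulate(50_000, seed=3, do_x=-1.0)
interventional = (fmean(y_hi) - fmean(y_lo)) / 2.0

print(f"observational slope ~ {observational:.2f}, interventional effect ~ {interventional:.2f}")
```

A model that recommends "increase X to raise Y" from the observational pattern alone is making exactly the error this class describes.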

Class 4: Code and Formal Specification Failures

When models reason about code — tracing execution, identifying bugs, predicting outputs — they exhibit specific failure patterns: missing edge cases (off-by-one errors, empty input handling, overflow conditions), misunderstanding specifications (confusing what a function should do with what it does), and incorrectly predicting the behavior of code involving state (mutations, side effects, concurrency).
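When a model predicts what code will do, the cheapest check is to run the code. A sketch, assuming you have collected the model's predicted outputs for a handful of deliberately chosen edge cases; `check_predictions`, `last_k`, and `average` are hypothetical examples, and the "predicted" values stand in for what a model might claim. Note that `items[-0:]` is the whole list, which is exactly the kind of edge case that looks right on a read-through.

```python
def check_predictions(func, cases):
    """Run `func` on each case and collect (args, predicted, actual) where they disagree."""
    mismatches = []
    for args, predicted in cases:
        try:
            actual = func(*args)
        except Exception as exc:  # a raised exception is also an observable outcome
            actual = f"raises {type(exc).__name__}"
        if actual != predicted:
            mismatches.append((args, predicted, actual))
    return mismatches


def last_k(items, k):
    """Intended: return the last k items of a list."""
    return items[-k:]


def average(xs):
    """Intended: arithmetic mean of xs."""
    return sum(xs) / len(xs)


# Hypothetical model predictions, including the edge cases reviewers tend to skip.
last_k_cases = [
    (([1, 2, 3], 2), [2, 3]),
    (([1, 2, 3], 0), []),   # k = 0: items[-0:] is items[0:], i.e. the whole list
    (([], 2), []),          # empty input
]
average_cases = [
    (([2, 4, 6],), 4.0),
    (([],), 0.0),           # empty input: the model assumes a default of 0.0
]

print(check_predictions(last_k, last_k_cases))
# [(([1, 2, 3], 0), [], [1, 2, 3])]
print(check_predictions(average, average_cases))
# [(([],), 0.0, 'raises ZeroDivisionError')]
```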

Class 5: Spatial Reasoning Failures

Models struggle with tasks involving spatial layout, navigation, and geometric relationships. They perform better on spatial tasks described in canonical orientations (north/south/east/west) than in relative orientations (left/right/ahead/behind), and fail systematically on tasks requiring mental rotation or 3D spatial inference from 2D descriptions.
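Since models handle canonical orientations better than relative ones, one mitigation for this class is to decompose relative descriptions into cardinal ones before reasoning about them. A minimal sketch of that translation step, assuming a flat grid (x grows east, y grows north) and that each relative move first turns from the current heading and then walks; `to_canonical` is a hypothetical helper.

```python
# Cardinal headings in clockwise order; index arithmetic mod 4 performs the turns.
HEADINGS = ["north", "east", "south", "west"]
STEP = {"north": (0, 1), "east": (1, 0), "south": (0, -1), "west": (-1, 0)}
QUARTER_TURNS = {"ahead": 0, "right": 1, "behind": 2, "left": 3}  # clockwise


def to_canonical(start_heading, moves):
    """Rewrite relative moves as cardinal legs.

    moves: list of (relative_direction, distance); each move first turns relative to
    the current heading, then walks `distance` units. Returns (legs, position, heading).
    """
    h = HEADINGS.index(start_heading)
    x = y = 0
    legs = []
    for rel, dist in moves:
        h = (h + QUARTER_TURNS[rel]) % 4
        heading = HEADINGS[h]
        dx, dy = STEP[heading]
        x, y = x + dx * dist, y + dy * dist
        legs.append((heading, dist))
    return legs, (x, y), HEADINGS[h]


legs, position, facing = to_canonical("north", [("right", 3), ("left", 2), ("behind", 1)])
print(legs)              # [('east', 3), ('north', 2), ('south', 1)]
print(position, facing)  # (3, 1) south
```

The cardinal legs can then be handed back to the model (or checked directly), which removes the running "which way am I facing now?" state that relative descriptions force it to track.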

Class 6: Physical Intuition Failures

Physical reasoning — predicting how objects behave under forces, reasoning about material properties, estimating physical quantities — is a consistent weak point. The paper presents compelling examples where models produce physically implausible outputs (objects floating, impossible force distributions) despite the physical constraints being unambiguous in the problem statement.
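Some physical claims can be screened with a hard bound from first principles. As an illustration (my own, not an example from the paper): an object dropped from rest can never hit the ground sooner than it would in a vacuum, because drag and buoyancy only slow it down, so any claimed fall time below t = sqrt(2h / g) is implausible. The sketch assumes a drop near Earth's surface with g = 9.81 m/s².

```python
import math

G = 9.81  # m/s^2, standard gravity near Earth's surface (assumed)


def free_fall_time(height_m):
    """Drag-free time to fall height_m from rest: t = sqrt(2h / g)."""
    return math.sqrt(2.0 * height_m / G)


def violates_lower_bound(height_m, claimed_s, tolerance=0.05):
    """True if a claimed fall time beats the vacuum limit by more than `tolerance` (fractional).

    Drag and buoyancy can only lengthen a fall from rest, so a claim shorter than
    the vacuum time is physically implausible; a longer claim may still be fine.
    """
    return claimed_s < free_fall_time(height_m) * (1.0 - tolerance)


print(round(free_fall_time(20.0), 2))   # 2.02
print(violates_lower_bound(20.0, 1.0))  # True: faster than free fall in a vacuum
print(violates_lower_bound(20.0, 2.5))  # False: consistent with some air resistance
```

The check is one-sided on purpose: it rejects the impossible without pretending to know how much drag a particular object experiences.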

Class 7: Temporal Reasoning Failures

Sequence ordering, duration estimation, and event timeline reasoning are failure-prone. Models systematically underestimate durations for unfamiliar activities, overestimate durations for routine activities, and make systematic errors when temporal reasoning involves multiple interleaved event streams.
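For event-ordering errors, one mechanical check is to extract pairwise "A happens before B" claims from a model's answer and test whether they admit any consistent timeline. A sketch using Python's standard `graphlib` module (3.9+); the event names are made up, and the extraction step is assumed to have happened already. It catches contradictory orderings only, not wrong durations.

```python
from graphlib import CycleError, TopologicalSorter  # Python 3.9+


def check_timeline(before_pairs):
    """before_pairs: (a, b) tuples meaning "a happens before b".

    Returns (True, order) if the claims admit a consistent ordering,
    or (False, cycle) with the contradictory events if they do not.
    """
    sorter = TopologicalSorter()
    for earlier, later in before_pairs:
        sorter.add(later, earlier)  # `later` can only come after `earlier`
    try:
        return True, list(sorter.static_order())
    except CycleError as err:
        return False, err.args[1]  # per the docs: a node list whose first and last entries match


claims = [("check-in", "security"), ("security", "boarding"), ("boarding", "takeoff")]
print(check_timeline(claims))
# (True, ['check-in', 'security', 'boarding', 'takeoff'])
print(check_timeline(claims + [("takeoff", "security")])[0])
# False
```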

The Structural Pattern

Across all seven failure classes, the paper identifies a common structural pattern: LLM reasoning failures are often locally fluent but globally incoherent.

Individual reasoning steps look plausible. The prose is well-formed. The terminology is correct. The format is appropriate. But somewhere in the chain, a wrong assumption was made, a constraint was violated, or an inference step was invalid — and the model continues confidently from that wrong step without backtracking.
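A small illustration of "locally fluent, globally incoherent": below, a hypothetical four-step arithmetic chain slips once at its second step, and every later step is a correct operation on the wrong value it inherited. `audit_chain` (a name of my own) checks each step against its stated input and also carries the true value forward, so it both pinpoints the first bad step and reports what the answer should have been.

```python
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul}


def audit_chain(start, steps):
    """steps: (op, operand, claimed_result) tuples, each applied to the previous claimed result.

    Returns (local_ok, truth): whether each step is a correct operation on the value
    the chain itself carried in, and the true final value computed from `start`.
    """
    local_ok = []
    truth = start
    prev_claimed = start
    for op, operand, claimed in steps:
        local_ok.append(OPS[op](prev_claimed, operand) == claimed)
        truth = OPS[op](truth, operand)
        prev_claimed = claimed
    return local_ok, truth


# Hypothetical chain: 12 * 3 = 36, then a slip (36 + 7 written as 44), then two correct steps.
steps = [("*", 3, 36), ("+", 7, 44), ("*", 2, 88), ("-", 8, 80)]
local_ok, truth = audit_chain(12, steps)
print(local_ok)  # [True, False, True, True]
print(truth)     # 78, while the chain concluded 80
```

Three of the four steps are individually valid, which is why skimming a trace for plausibility misses the error; only checking each step against what it was actually given localizes it.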

This pattern is particularly dangerous in high-stakes applications because the appearance of correct reasoning is maintained even as the substance goes wrong. Human reviewers reading a model's reasoning trace must actively look for specific error types rather than just assessing overall plausibility.

Why This Matters

Reasoning failures are not random. The seven-class taxonomy reveals that LLM reasoning failures are systematic — they cluster around specific types of reasoning in specific contexts. This is important because it means you can design systems that are specifically robust to the failure types that matter most in your application.

Mitigation strategies are failure-class-specific. Mathematical failures respond to verification (run the calculation again, check with a calculator). Causal reasoning failures respond to structured causal model prompting. Spatial failures respond to decomposition into canonical orientation descriptions. There is no single prompt engineering strategy that addresses all failure classes — you need to know which class you're most exposed to.

The embodied/non-embodied distinction has deployment implications. If you're deploying an LLM in a physical world context — robotics, smart building management, autonomous systems — embodied reasoning failures are your primary concern, and they're the least well-addressed by current evaluation methodology. Most teams deploying LLMs for physical applications are not specifically testing for spatial and physical intuition failures.

Benchmark design needs to evolve. The paper implicitly critiques the evaluation ecosystem. If you only evaluate on non-embodied reasoning benchmarks, you miss the entire embodied branch of the failure taxonomy. A model that achieves 90% on MATH and 85% on GPQA might be completely unreliable at spatial reasoning, and you'd never know it from standard benchmarks.

My Take

I've been doing applied work with LLMs across multiple domains for several years, and the systematic nature of reasoning failures is one of the most important practical lessons I've absorbed. When an LLM fails on a reasoning task, it's almost never because of random noise — there's usually a specific category of error that the task activated. Recognizing the category is the key to knowing whether to trust the model's output in similar contexts.

The seven-class taxonomy in this paper is the most organized version of this insight I've seen. What I particularly appreciate is the separation of embodied from non-embodied reasoning. Most practitioners I work with think of LLM reasoning failures as a single phenomenon. They're not — the failure modes, the triggers, and the mitigations are genuinely different, and treating them as unified leads to both over-reliance (the model is great at abstract math, so I trust it on physical reasoning without checking) and under-reliance (the model made a spatial error, so I distrust its logical reasoning).

My practical recommendation: before deploying any LLM in a reasoning-critical application, identify which of the seven failure classes are most relevant to your use case, create diagnostic test cases specifically targeting those classes, and establish failure rate thresholds before deployment. Don't rely on generic benchmarks — they're optimized to measure peak capability, not failure modes.
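Here's roughly what that recommendation looks like as a deployment gate. It's a sketch under stated assumptions: the class labels, pass/fail results, and thresholds below are placeholders, not numbers from the paper, and any class with no diagnostic cases is treated as failing so coverage gaps block deployment instead of slipping through.

```python
from collections import defaultdict


def failure_rates(results):
    """results: iterable of (failure_class, passed) pairs from a diagnostic suite."""
    totals, failures = defaultdict(int), defaultdict(int)
    for cls, passed in results:
        totals[cls] += 1
        if not passed:
            failures[cls] += 1
    return {cls: failures[cls] / totals[cls] for cls in totals}


def deployment_gate(results, thresholds):
    """Return the classes whose failure rate exceeds their threshold; empty means go.

    A class with no diagnostic cases gets a rate of 1.0, so coverage gaps block deployment.
    """
    rates = failure_rates(results)
    return sorted(cls for cls, limit in thresholds.items() if rates.get(cls, 1.0) > limit)


# Placeholder results and thresholds, for illustration only.
results = [
    ("math", True), ("math", False), ("math", True), ("math", True),
    ("causal", True), ("causal", True),
    ("spatial", True), ("spatial", False), ("spatial", False),
]
thresholds = {"math": 0.30, "causal": 0.10, "spatial": 0.20, "temporal": 0.20}

print(deployment_gate(results, thresholds))  # ['spatial', 'temporal']
```

Setting thresholds per class, rather than one overall pass rate, keeps a strong math score from masking a weak spatial one.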

The paper's contribution is identifying and organizing the failure taxonomy. Applying that taxonomy to deployment engineering is still largely the practitioner's responsibility. This post is my attempt to start building that bridge.

If you're serious about building reliable AI systems, read this paper. The field has spent years studying what LLMs can do. It's time we spent equal energy on what they reliably cannot.

arXiv:2602.06176 — read the full paper at arxiv.org/abs/2602.06176

Explore more from Dr. Jyothi