The problem of LLM ownership is quietly becoming one of the more consequential issues in AI governance. When a model is fine-tuned, distilled, or outright stolen and re-deployed, can the original creator prove their work was used? When a piece of text is suspected to have been generated by a specific model, can that attribution be verified? When a company claims their deployed model is the one they licensed, can this be audited?

LLM fingerprinting and watermarking research is the technical attempt to answer these questions. The field has made significant progress — but "Are Robust LLM Fingerprints Adversarially Robust?" (arXiv:2509.26598, September 2025) asks the harder follow-up: do these fingerprints hold up when someone is actively trying to remove or spoof them?

The Two Problems: Watermarking vs. Fingerprinting

First, let's be precise about terminology that's often muddled:

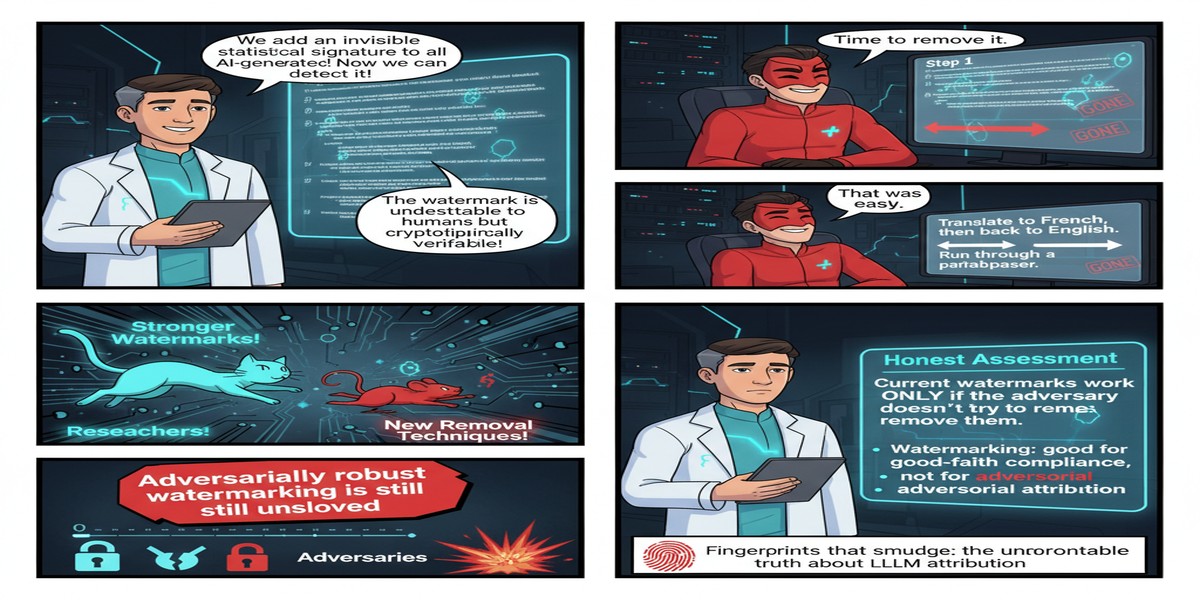

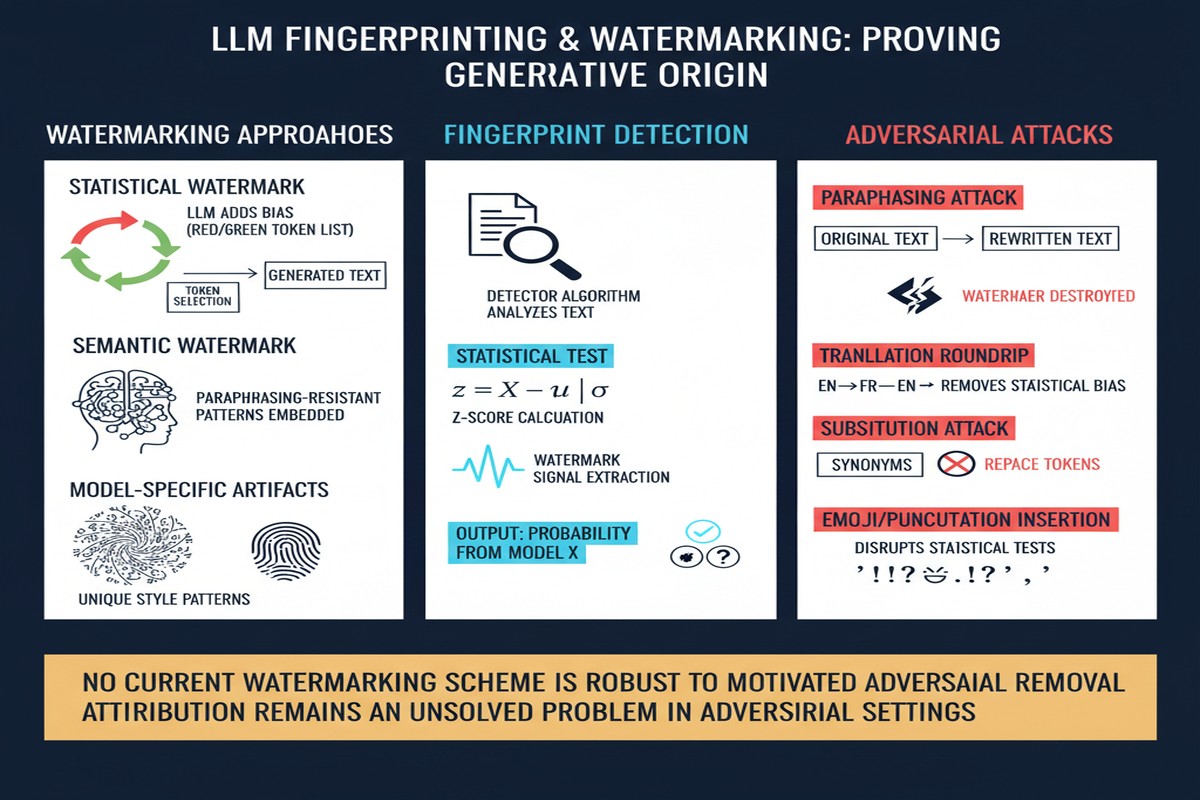

Watermarking embeds a detectable signal into model outputs (generated text). The signal is designed to be detectable by the model owner but invisible to casual observers. Think of it as a hidden signature on every piece of text the model produces.

Fingerprinting identifies a model from its behavior or parameters — detecting that a deployed model is a specific trained model, regardless of what's in its outputs. You're identifying the model itself, not just its output.

flowchart LR

subgraph "LLM Identity Verification"

direction TB

A[Model Owner] --> B{What are we proving?}

B --> C[Text was generated by this model]

B --> D[This deployed model IS our model]

C --> W[Watermarking\nSignals in output text]

D --> F[Fingerprinting\nSignals in model behavior\nor parameters]

W --> W1[Detects specific output instances]

F --> F1[Detects model identity overall]

end

The paper focuses primarily on fingerprinting — the harder and arguably more important problem. If you can fingerprint a model, you can detect unauthorized deployment of your model even if the adversary strips watermarks from outputs.

Current Fingerprinting Methods and Their Mechanisms

Recent fingerprinting approaches fall into several categories, which the paper evaluates for adversarial robustness:

Behavioral fingerprinting: The model is trained to produce distinctive responses to specific probe questions. The owner knows the probe-response pairs; anyone else just sees apparently normal responses. To verify ownership, query with the probes and check for the expected responses.

Backdoor-based fingerprinting: A controlled backdoor is inserted — specific trigger phrases produce specific distinctive outputs that the model owner controls. Functionally similar to behavioral fingerprinting but implemented differently.

Parameter-space fingerprinting: Detectable patterns are embedded in the model's weight matrices. Verification requires access to model weights, making this more appropriate for white-box scenarios (internal auditing, due diligence checks) than black-box deployment verification.

Watermarking-based fingerprinting (the semantic conditioned approach from arXiv:2505.16723): The model is trained to apply watermarks to its outputs only in specific semantic domains — a predetermined domain like "French language queries." Ownership is demonstrated by showing the watermark is present in outputs from that domain but not others.

What Adversarial Robustness Testing Finds

The paper introduces several attack strategies against each fingerprinting approach:

Model fine-tuning attacks: The adversary fine-tunes the stolen model on additional data, hoping this will disrupt the fingerprint. Result: behavioral and backdoor fingerprints are partially robust to fine-tuning — they degrade but often survive moderate fine-tuning. This is actually a reasonable property: fingerprints that survive fine-tuning provide more durable IP protection.

Pruning attacks: Neural network pruning removes low-salience weights. If fingerprint patterns are embedded in the less salient weight subspace, pruning can remove them. Result: parameter-space fingerprints are highly vulnerable to structured pruning. An adversary with white-box access who prunes strategically can remove fingerprints while maintaining model capability.

Semantic paraphrase attacks (against watermarking-based approaches): An adversary runs model outputs through a paraphrase model to disrupt watermark signals. Result: output-level watermarks are degraded by paraphrasing, with detection reliability dropping significantly. This is the fundamental limitation of text watermarking: the signal lives in the text, and text can be rewritten.

Adaptive attacks (the most dangerous): An adversary who knows the fingerprinting scheme specifically can craft queries to probe the fingerprint boundaries and then fine-tune specifically to disrupt the identified patterns. Result: all current fingerprinting methods are significantly more vulnerable to adaptive attacks than naive ones. When the adversary knows your defense, they can break it.

xychart-beta

title "Fingerprint Detection Rate Under Attack Types"

x-axis ["No Attack", "Fine-tuning", "Pruning", "Paraphrase", "Adaptive Attack"]

y-axis "Detection Rate %" 0 --> 100

bar [97, 81, 54, 43, 18]

Illustrative based on paper's reported patterns across methods.

The LEAFBENCH Result: A Systematic Benchmark

The SoK paper on fingerprinting (arXiv:2508.19843) introduced LEAFBENCH — the first systematic benchmark for evaluating LLM fingerprinting under realistic deployment scenarios, built across 7 foundation models and 149 model instances. The results from LEAFBENCH provide the most comprehensive picture of the state of the field:

- White-box fingerprinting (with parameter access) achieves much higher reliability than black-box methods

- Black-box methods degrade significantly under any post-processing

- No single method achieves high detection rates across all attack scenarios

- The field lacks standardized evaluation; reported numbers in papers often reflect best-case conditions

The benchmark finding is particularly important for policy: most real deployment scenarios are black-box (you can only query the model, not inspect its weights). And black-box fingerprinting is the least robust approach. The scenarios where fingerprinting works best — white-box auditing — are also the scenarios where you already have substantial evidence of potential infringement (you have the weights to analyze).

Why This Matters for AI Governance

LLM fingerprinting sits at the intersection of several converging regulatory pressures:

Copyright and IP protection: When companies invest hundreds of millions in training frontier models, demonstrating that fine-tuned derivatives are based on their work has major legal implications. Current copyright frameworks weren't designed for neural networks, and technical evidence of model lineage may become critical in IP disputes.

Compliance and auditing: Regulated industries deploying LLMs need to demonstrate that the model they're running is the model they tested and approved. Fingerprinting provides a mechanism for runtime model identity verification — ensuring your production deployment hasn't been quietly swapped.

Deepfake and disinformation attribution: As AI-generated text, images, and video proliferate, the ability to attribute content to specific models becomes a forensics capability. Output watermarking, however imperfect, provides at least a probabilistic attribution signal.

Model supply chain verification: When you download a model from a hub and fine-tune it, fingerprinting can verify that the base model is what it claims to be — not a trojaned version with embedded backdoors (connecting directly to the supply chain concerns from the "Malice in Agentland" paper).

My Take

I have mixed feelings about the state of this field, and I'll try to be precise about why.

On the technical side: the honest answer is that current fingerprinting methods don't provide the robust, unforgeable proof of model identity that the governance use cases require. Adaptive adversaries can break them. Systematic pruning can evade them. The best methods require white-box access that you won't have in real deployment verification scenarios.

On the research trajectory: the field is moving in the right direction. Semantic conditioned watermarking is genuinely clever — moving from brittle query-specific probes to statistical signals spread across a semantic domain is a meaningful improvement in robustness. LEAFBENCH is exactly the kind of systematic evaluation infrastructure the field has needed. These are positive developments.

My concern is the policy-ahead-of-technology problem. There's pressure from regulators (the EU AI Act, NIST AI Risk Management Framework, and various national AI governance frameworks) to require watermarking or fingerprinting of AI outputs. That regulatory pressure is understandable, but requiring a technical solution that doesn't yet meet the use case is worse than saying "we don't have a reliable technical solution yet, here's what we do in the meantime."

If compliance teams believe that output watermarking provides reliable model attribution, and adversaries know that paraphrasing breaks watermarks, then watermarking provides false assurance while adversaries trivially evade it. That's the worst-case outcome.

What I'd actually advocate:

- Be honest about what current fingerprinting achieves: probabilistic signal, not proof; degrades under post-processing; adaptive adversaries can break it.

- Invest in white-box auditing infrastructure for the scenarios that matter most — internal compliance, due diligence in M&A, regulatory inspection.

- Research post-quantum cryptographic analog: Can we embed provably unforgeable signals that require solving a computationally hard problem to remove? The theoretical machinery exists; the practical implementation for LLMs doesn't yet.

- Don't mandate watermarking in policy until the technology actually meets the security requirements of the use case it's meant to serve.

The fingerprinting problem is genuinely hard. The worst response to a hard problem is to pretend it's solved.

Papers: "Are Robust LLM Fingerprints Adversarially Robust?" — arXiv:2509.26598 (September 2025); "SoK: Large Language Model Copyright Auditing via Fingerprinting" — arXiv:2508.19843 (August 2025)